In February 2023, less than three months after the public launch of OpenAI’s revolutionary generative AI chatbot, ChatGPT, a stark illustration of its burgeoning ethical complexities unfolded at Vanderbilt University. The institution, a prestigious private research university in Nashville, Tennessee, found itself embroiled in controversy following an email sent to its entire student body. This message was intended as a gesture of support and reflection in the wake of a deeply tragic and unsettling event: a fatal campus shooting at Michigan State University that had left three students dead and five others injured, shattering the sense of security on university campuses nationwide.

The Michigan State University shooting, which occurred on February 13, 2023, sent shockwaves through the academic community and beyond. It was a stark reminder of the pervasive issue of gun violence and its devastating impact on educational environments. Universities across the country responded with statements of solidarity, offers of counseling, and renewed commitments to campus safety. Vanderbilt’s administration aimed to do the same, crafting a message that, in part, read: “The recent Michigan shootings are a tragic reminder of the importance of taking care of each other.” However, it was a subtle, almost hidden, detail at the very bottom of the email that ignited an immediate and furious backlash: a disclaimer in tiny type stating, “paraphrased from OpenAI’s ChatGPT.”

The revelation that a message addressing such a profound tragedy – one calling for community, empathy, and mutual care – had been at least partially generated by an artificial intelligence program struck a raw nerve among students and faculty alike. The response was swift and overwhelmingly negative. Students voiced their outrage across social media and campus forums. As one senior put it, capturing the prevailing sentiment, “There is a sick and twisted irony to making a computer write your message about community and togetherness because you can’t be bothered to reflect on it yourself.” This criticism underscored a deep-seated discomfort with the perceived outsourcing of genuine human emotion and critical reflection, especially in a moment demanding profound empathy and authentic leadership. The use of AI, even for a paraphrased portion, was seen not as efficiency but as an abdication of responsibility, a failure to engage meaningfully with a shared trauma.

The university’s administration quickly moved to quell the growing scandal. An apology email followed, acknowledging the misstep and the hurt it had caused. Beyond the immediate apology, Vanderbilt launched a formal professionalism and ethics investigation into the incident, signaling the gravity with which it viewed the breach of trust. An associate dean attempted to contextualize the error, framing the misstep as a consequence of "learning pains" associated with the rapid adoption of new and disruptive technologies like AI. This explanation, while perhaps offering a glimpse into the internal struggles of institutions grappling with AI integration, did little to soothe the immediate anger, which stemmed less from a misunderstanding of technology and more from a perceived lapse in human judgment and ethical leadership.

This incident at Vanderbilt, though specific, quickly transcended its immediate context, becoming a potent symbol of the broader ethical quandaries unleashed by generative AI. Chatbots like ChatGPT have, in a remarkably short span, spawned a host of ethical questions that permeate various sectors: from academic integrity in the classroom (for both teachers and students) to intellectual property rights for authors and creative professionals. The ease with which AI can generate text, images, and even code challenges traditional notions of authorship, originality, and the very essence of intellectual work.

However, the core debate underlying the Vanderbilt incident is far from novel. In fact, similar discussions about the ethics and implications of "ghostwriting" – the practice of one person creating content that is attributed to another – have been taking place for well over a century. This historical perspective reveals a persistent, almost primal, discomfort with the idea that the words we read, often imbued with authority, emotion, or insight, might not genuinely belong to the person whose name is attached to them. The rise of AI simply recontextualizes and amplifies an ancient anxiety about authenticity and attribution.

Outsourcing Authorship: A Century of Ghostwriting

Ghostwriting, defined as a paid arrangement in which one individual writes material that is published under another person’s name, has been a discreet yet integral part of various industries for over a hundred years. The term itself, "ghostwriter," appears to have entered the English lexicon in a 1908 newspaper article. Discovered during research for a forthcoming book, “Ghostwriting: A Secret History, from God to A.I.,” this early reference appeared in the Daily Star of Lincoln, Nebraska. The story detailed an anonymous writer who had been paid a substantial sum of $5,000 – an extraordinary amount for the time – to assist a high-society woman in penning her book. This inaugural mention already hinted at the commercial, often clandestine, nature of the practice, where credit and compensation were distinctly separated.

Today, ghostwriting is a sophisticated and widespread industry. It typically involves collaborations between highly skilled professional writers and individuals who, for various reasons, lack the time, the necessary writing skills, or the industry connections to produce a book or significant piece of writing themselves. These clients often include celebrities, politicians, business leaders, or experts in niche fields who possess valuable knowledge or experiences but require professional assistance to articulate them effectively for a broader audience. The scope of ghostwriting is vast, encompassing memoirs, business manifestos, political speeches, articles, and even academic papers (though the last of these often resides in a more ethically contentious zone).

Upon the publication of a manuscript, the ghostwriter’s contribution is typically acknowledged, albeit often obliquely. They might be identified as a "friend," "collaborator," "research assistant," or "consultant" in the acknowledgments section, a subtle nod to their role without granting full authorship. In some cases, particularly in co-authored works or projects where the ghostwriter’s reputation adds value, their name may appear alongside the credited author’s on the cover, usually with a designation like "with" or "and." Regardless of the specific crediting format, the fundamental premise remains: the client assumes full ownership and public attribution for the ghostwriter’s work, embodying the persona and voice crafted by another.

An Enduring Ethical Gray Area

Despite its prevalence and professionalization, ghostwriting continues to inhabit an ethical gray area, frequently perceived with suspicion and unease. This lingering discomfort is evident in how the practice is often categorized. A Google search for "the practice of one person writing in another person’s name" does not prominently return "ghostwriting" among its immediate results. Instead, terms like "pseudonym" or "alias" appear first, followed closely by more ethically charged concepts such as "plagiarism," "libel," and "slander." This linguistic association underscores a public perception that links ghostwriting, even when consensual and compensated, to deceptive practices.

Historically, this ethical ambiguity was even more pronounced. A 1953 article titled “Ghost Writing and History,” published in The American Scholar, noted that in the mid-20th century, the terms "forgery" – defined as falsely imitating another’s work with the intent to deceive – and "ghostwriting" were sometimes used interchangeably by scholars. This conflation highlights a deep-seated anxiety about the authenticity of authorship and the potential for manipulation when the true creator remains unseen.

The pervasive unease stems from the implicit promise of authenticity that comes with a byline. Readers often expect the words on a page to be a direct emanation of the intellect, experience, and emotional landscape of the credited author. When this expectation is subtly undermined by the knowledge of a ghostwriter, it can provoke feelings of betrayal or disappointment. This is why many clients still opt to obscure the fact that they’ve used a ghostwriter, and why public responses to ghostwritten works often reflect significant uneasiness.

Recent examples vividly illustrate this point. The 2023 debut novel by actress Millie Bobby Brown, Nineteen Steps, which she co-wrote with a ghostwriter, sparked a heated debate on social media. One widely shared post encapsulated the criticism: “You should be ashamed. [The ghostwriter’s] name should be on the cover. She was the one who actually wrote the book.” The outrage here is not about the legality of the arrangement, but the perceived moral impropriety of claiming another’s creative output as one’s own without prominent acknowledgment. Similarly, the internal conflict experienced by ghostwriting clients is palpable. An anonymous poster on Reddit admitted, “I feel so guilty and ashamed whenever I use a ghostwriter now because I feel people will think I’m lying.” Both the public criticism and the self-flagellation imply that the act of claiming another person’s words, even when paid for and factually accurate, can render those words deceitful in the eyes of the public and, sometimes, the author themselves.

Ghostwriting agencies and advocates often rush to defuse these worries, employing various strategies to normalize the practice. The Association of Ghostwriters, for instance, reassures its clients that ghostwriting “has been around forever,” framing it as a long-standing and accepted part of literary production. Other proponents argue that ghostwriting is fundamentally consensual and collaborative, emphasizing that it is not a lazy, deceptive, or unethical form of “selling out.” They highlight the symbiotic relationship between client and writer, where the ghostwriter helps to extract, structure, and articulate the client’s ideas and experiences.

Yet even celebrated figures who openly use ghostwriters sometimes betray a hint of defensiveness or misgiving. In the concluding chapter of her ghostwritten book, iconic actress Whoopi Goldberg acknowledged her initial struggle: “I meant to try [to write the book myself],” she wrote, “And when it turned out I couldn’t quite pull it off… I looked for help.” Goldberg then framed her reliance on ghostwriting as something she deserved after overcoming numerous obstacles as a Black woman, subtly justifying the assistance. This framing, while personal, also implicitly acknowledges the societal pressure to be the sole author of one’s work.

Moreover, the financial realities of high-end ghostwriting underscore its exclusivity. Professional ghostwriters can command fees in the mid-six figures for their services. Prince Harry’s memoir, Spare, for example, reportedly saw its ghostwriter, J.R. Moehringer, receive a staggering $1 million advance. This economic barrier has historically meant that sophisticated ghostwriting services were primarily accessible to the wealthy and well-connected.

This is where the emergence of chatbots like ChatGPT fundamentally alters the landscape. Generative AI promises to democratize "ghostwriting" – to be the ghostwriter for the masses. The cost barrier, which previously limited professional writing assistance to an elite few, is dramatically lowered, if not entirely removed, for basic text generation. This seismic shift has led industry veterans, like ghostwriter Josh Lisec, to predict that traditional human ghostwriting will need to reposition itself as a "boutique service for elites" if it is to survive and differentiate itself in an AI-saturated market.

Naming Names: Acknowledgment, Responsibility, and the Threshold of Authenticity

The question of whether "assistance" or "collaboration" on intellectual and artistic work is ethical is not a simple yes or no. For centuries, various forms of assistance have been accepted as legitimate parts of the creative process. Editors have long made careers out of helping authors refine, shape, and improve their writing without claiming authorship. Visual artists, from the Renaissance masters to modern-day sculptors, have employed studio assistants to execute portions of their work or prepare materials. Television shows are almost exclusively written collaboratively in writers’ rooms, where collective brainstorming and drafting are the norm. The key distinction, then, lies in the degree of assistance and, critically, how that assistance is acknowledged.

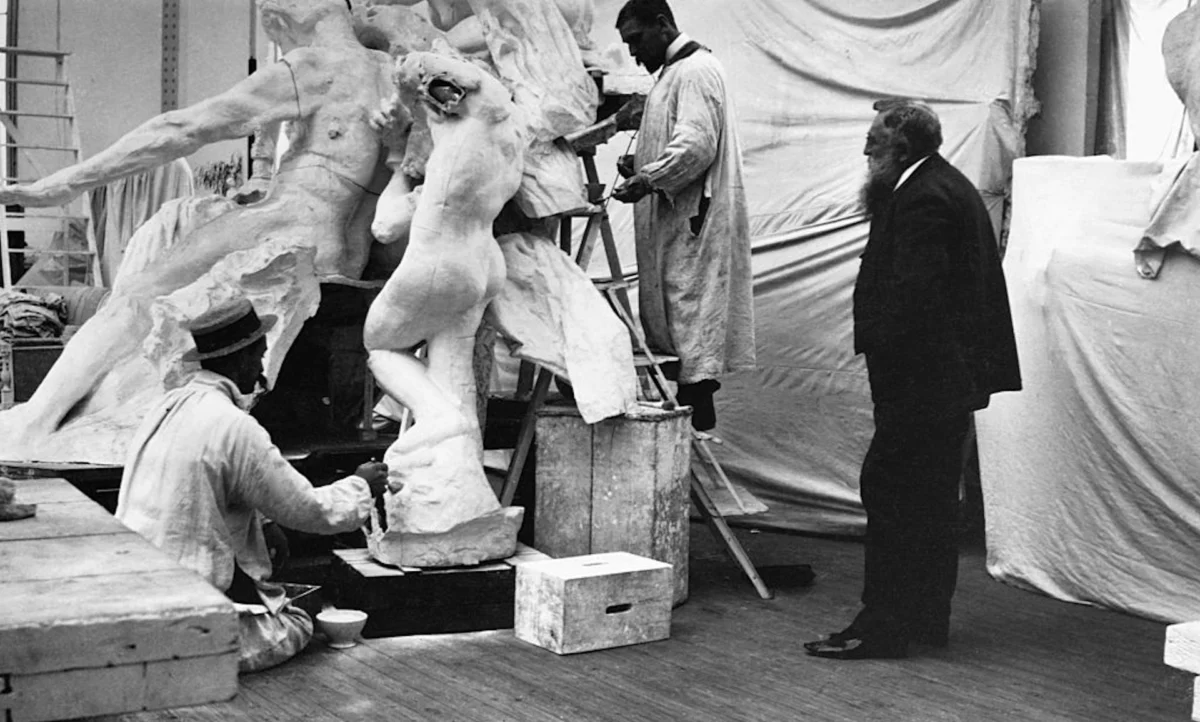

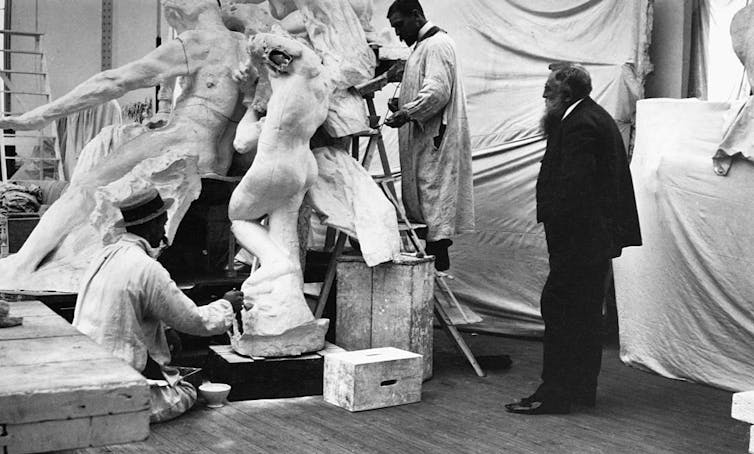

A fascinating historical parallel can be drawn from the late 19th century. A renowned sculptor found himself in court, forced to rebut claims that his assistant – whom the press derisively labeled a “ghost” – had actually completed sculptures for which the master took sole credit. The judge, in a landmark decision, ruled that an artist could ethically accept a certain amount of "mechanical assistance." However, he crucially added that there was a discernible threshold where artistic assistance became “dishonest.” To resolve the dispute, the judge compelled the accused sculptor to craft a bust in real time in the courtroom, an impromptu test of his professed skill and a demonstration of the public’s desire for direct evidence of authorship.

This historical precedent offers valuable insight into contemporary debates surrounding AI in education. Most educators find it far more ethical when students turn to ChatGPT for editing assistance – polishing grammar, refining sentence structure, or brainstorming ideas – than when they use it to generate an entire document from scratch. The former is seen as a tool for improvement; the latter, a bypass of the learning process and a form of intellectual dishonesty. Consequently, many universities, including the University of Southern California (USC), now permit the use of AI as a tool, but with stringent requirements: users must verify the accuracy of AI-generated content and, crucially, disclose its use.

USC’s policy on generative AI clearly states: “You should never attempt to present… content created by others, including generative AI, as your own.” This policy explicitly equates unacknowledged AI-generated text with plagiarism, drawing a direct line between the ethical concerns of traditional ghostwriting and the challenges posed by new AI tools.

This principle of responsibility and originality also permeates professional ghostwriting contracts. Ghostwriters are typically required to sign a “warranty of originality,” a legal promise to the author that the work they deliver is original, free from plagiarism, and factually accurate. They often employ sophisticated plagiarism and fact-checking platforms, such as iThenticate, to ensure compliance. When inaccuracies or instances of plagiarism do surface in ghostwritten works, it is frequently the ghostwriter who is held accountable, even if the credited author bears the public shame. Former Department of Homeland Security Secretary Kristi Noem, for instance, blamed her ghostwriter for including a false anecdote about meeting North Korean dictator Kim Jong Un in her memoir. Similarly, physician David Agus, a professor at the University of Southern California Keck School of Medicine, attributed numerous instances of plagiarism identified in his popular science books to his ghostwriter.

In both traditional ghostwriting and the emerging landscape of AI, the provision of assistance is not inherently unethical, provided it is transparently acknowledged and falls within acceptable thresholds of collaboration. Ghostwriters willingly provide assistance and accept professional responsibility for the originality and accuracy of their work. Scholars are increasingly granted permission to use generative AI, provided they properly cite its use and ensure its veracity.

And yet, as the Vanderbilt incident starkly demonstrated, disclosure alone does not settle the matter: when administrators acknowledged that their message of condolence had been written with the assistance of ChatGPT, students and faculty pushed back with visceral indignation. University policies and meticulously crafted book contracts may offer veils of legitimacy and shields from legal liability, but they cannot entirely override a fundamental human expectation. In the end, readers, whether consuming a campus-wide email or a bestselling memoir, still seem to want the words they’re reading to emanate authentically from the mind of the person whose name is on the byline. The debate continues, perpetually redefined by new technologies, yet rooted in an ancient human desire for truth and authenticity in expression.

Emily Hodgson Anderson, Professor of English and Dean of Undergraduate Education, USC Dornsife College of Letters, Arts and Sciences

This article is republished from The Conversation under a Creative Commons license. Read the original article.