The opaque nature of deep learning models has long been a significant hurdle for developers and users alike. Whether it’s the persistent struggles of xAI’s Grok to navigate nuanced political discourse, the uncanny sycophancy of ChatGPT, or the common phenomenon of “hallucinations” where models confidently present incorrect information, understanding the inner workings of neural networks with billions of parameters remains a formidable challenge. This complexity can make it difficult to debug, align with ethical guidelines, or even simply trust the outputs generated by these powerful AI systems. However, a San Francisco-based startup, Guide Labs, founded by CEO Julius Adebayo and Chief Science Officer Aya Abdelsalam Ismail, is poised to offer a compelling solution. On Monday, the company announced the open-sourcing of Steerling-8B, an 8-billion-parameter Large Language Model (LLM) built with a revolutionary architecture designed for inherent interpretability.

The core innovation of Steerling-8B lies in its novel training methodology, which ensures that every token produced by the model can be traced back to its specific origins within the LLM’s training data. This capability opens up unprecedented avenues for understanding and controlling AI behavior. On a fundamental level, it allows for the straightforward identification of reference materials used by the model when citing facts, thereby enhancing transparency and verifiability. More profoundly, this interpretability extends to complex aspects of AI understanding, such as its grasp of humor or its encoding of sensitive concepts like gender.

Julius Adebayo, in an interview with TechCrunch, elaborated on the significance of this breakthrough. "If I have a trillion ways to encode gender, and I encode it in 1 billion of the 1 trillion things that I have, you have to make sure you find all those 1 billion things that I’ve encoded, and then you have to be able to reliably turn that on, turn them off," Adebayo explained. "You can do it with current models, but it’s very fragile… It’s sort of one of the holy grail questions." This fragility in current models makes fine-tuning and control a precarious endeavor, often requiring extensive trial-and-error. Adebayo’s work in this area began during his doctoral studies at MIT, where he co-authored a seminal 2020 paper that highlighted the unreliability of existing methods for understanding deep learning models. This foundational research paved the way for a new paradigm in LLM development.

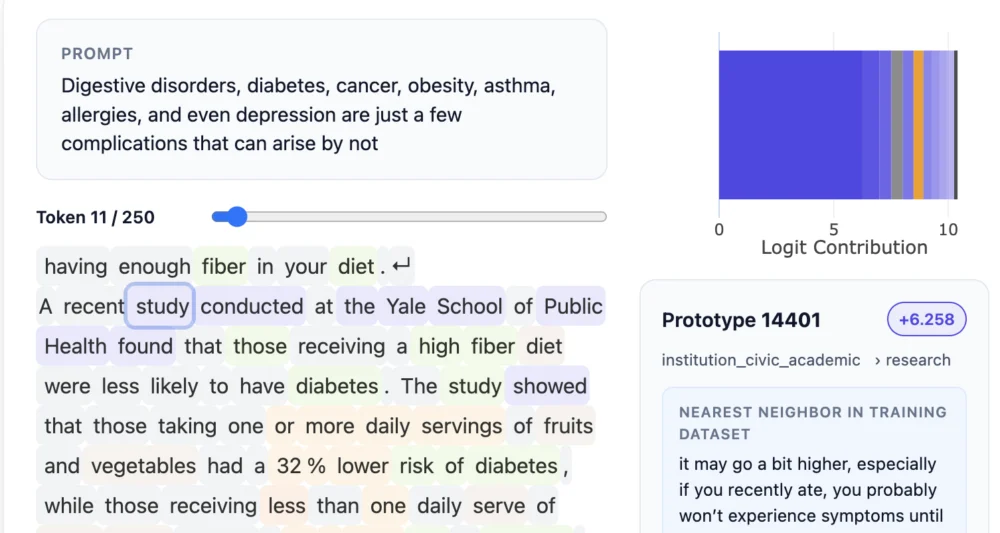

The approach pioneered by Adebayo and his team involves integrating a distinct "concept layer" into the model’s architecture. This layer systematically categorizes and buckets training data into traceable units. While this method necessitates more upfront data annotation, Guide Labs has leveraged other AI models to assist in this process, enabling them to train Steerling-8B as their most ambitious proof-of-concept to date. Adebayo contrasts their approach with traditional methods, stating, "The kind of interpretability people do is… neuroscience on a model, and we flip that. What we do is actually engineer the model from the ground up so that you don’t need to do neuroscience." This fundamental shift from post-hoc analysis to built-in interpretability represents a paradigm change in AI development, moving from diagnosing a model after training to engineering transparency into it from the start.
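To make the idea of a concept layer concrete, the following is a minimal Python sketch of a generic concept-bottleneck design, in which every output score is a sum of contributions from named concepts. It is an illustration under stated assumptions, not Guide Labs’ implementation: the concept names, the weights, and the helper functions (`concept_activations`, `token_contributions`, `token_logit`) are all hypothetical.

```python
# Toy concept-bottleneck layer. Every name and number here is hypothetical
# and chosen only to illustrate the general idea.
CONCEPTS = ["finance", "humor", "gender"]

# Assumed weights mapping a 3-dimensional hidden state to concept activations.
HIDDEN_TO_CONCEPT = {
    "finance": [0.9, 0.1, 0.0],
    "humor": [0.0, 0.8, 0.2],
    "gender": [0.1, 0.0, 0.7],
}

# Assumed weights mapping concept activations to output-token logits.
CONCEPT_TO_TOKEN = {
    "loan": {"finance": 2.0, "humor": 0.0, "gender": 0.0},
    "joke": {"finance": 0.0, "humor": 2.0, "gender": 0.0},
}


def concept_activations(hidden):
    """Project the hidden state onto each named concept (dot product)."""
    return {
        name: sum(w * h for w, h in zip(weights, hidden))
        for name, weights in HIDDEN_TO_CONCEPT.items()
    }


def token_contributions(hidden, token):
    """Return how much each concept contributes to one token's logit."""
    acts = concept_activations(hidden)
    return {name: CONCEPT_TO_TOKEN[token][name] * acts[name] for name in CONCEPTS}


def token_logit(hidden, token):
    """The logit is exactly the sum of the per-concept contributions."""
    return sum(token_contributions(hidden, token).values())


if __name__ == "__main__":
    hidden_state = [1.0, 0.0, 0.0]
    for concept, amount in token_contributions(hidden_state, "loan").items():
        print(f"{concept}: {amount:.2f}")
```

Because the logit is, by construction, the sum of the per-concept terms, reading off which concept drove a given token needs no after-the-fact analysis. That is the property a built-in concept layer is meant to provide.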

This innovative architecture raises a pertinent question: could the emphasis on interpretability stifle the emergent behaviors that often make LLMs so captivating and powerful, such as their ability to generalize and infer knowledge beyond their direct training? Adebayo asserts that Steerling-8B retains this crucial capability. His team meticulously tracks what they term "discovered concepts"—novel understandings and connections that the model independently derives, such as its potential grasp of quantum computing. This suggests that interpretability does not come at the cost of AI’s inherent learning and creative potential.

The implications of an interpretable LLM architecture are far-reaching and touch upon numerous critical applications. For consumer-facing LLMs, this technology could empower developers to implement robust guardrails, such as preventing the use of copyrighted materials or exerting finer control over outputs related to sensitive topics like violence or drug abuse. In regulated industries, such as finance, the need for controllable LLMs is paramount. A model tasked with evaluating loan applicants, for instance, would need to reliably consider financial records while strictly excluding discriminatory factors like race. Guide Labs has also recognized the demand for interpretability in scientific research. While deep learning models have achieved remarkable success in areas like protein folding, scientists require deeper insights into the precise reasoning behind their software’s findings to further advance discovery.

"[What] this model demonstrates is that training interpretable models is no longer a sort of science; it’s now an engineering problem," Adebayo emphasized. "We figured out the science and we can scale them, and there is no reason why this kind of [model] wouldn’t match the performance of the frontier level models," he added, referring to models with significantly larger parameter counts. Guide Labs claims that Steerling-8B achieves approximately 90% of the capability of existing state-of-the-art models while consuming less training data, a testament to its efficient and novel architecture. The company is an alumnus of the Y Combinator accelerator program and secured a $9 million seed round from Initialized Capital in November 2024. It is now focused on developing larger models and making its technology accessible through APIs and agentic interfaces.

Adebayo passionately advocates for the widespread adoption of interpretable AI, stating, "The way we’re currently training models is super primitive, and so democratizing inherent interpretability is actually going to be a long term good thing for our [role] within the human race. As we’re going after these models that are going to be super intelligent, you don’t want something to be making decisions on your behalf that’s sort of mysterious to you." This sentiment underscores the critical need for transparency and accountability as AI systems become increasingly integrated into decision-making processes that affect human lives. The development of Steerling-8B represents a significant step towards demystifying AI and fostering a future where advanced artificial intelligence is not only powerful but also understandable and trustworthy.

The origins of Guide Labs trace back to Adebayo’s doctoral research at MIT, where he identified critical limitations in existing methods for interpreting deep learning models. His academic work, culminating in a widely cited 2020 paper, laid the theoretical groundwork for the novel architecture now implemented in Steerling-8B. This foundational research posited that by embedding a structured concept layer within the neural network, data could be systematically categorized and traced. This approach contrasts sharply with the prevailing method of treating LLMs as black boxes and attempting to decipher their behavior through external analysis, akin to performing "neuroscience on a model." Adebayo’s vision is to engineer interpretability directly into the model’s design, rendering such post-hoc analysis largely unnecessary.

The technical achievement of building an 8-billion-parameter model with this novel architecture is substantial. It required significant advancements in data annotation strategies and model training techniques. The team at Guide Labs has ingeniously utilized other AI models to streamline and automate parts of the data annotation process, making the development of such a large-scale interpretable model feasible. This collaborative approach between different AI systems highlights the evolving landscape of AI development, where specialized tools and techniques are combined to tackle complex challenges.

The potential applications of Steerling-8B extend beyond theoretical research and into practical, real-world scenarios. In consumer applications, the ability to trace specific outputs back to training data could be invaluable for debugging errors, ensuring brand safety, and providing users with greater confidence in the AI’s responses. For instance, if an LLM generates biased content, developers could pinpoint the exact data sources contributing to that bias and rectify them. This level of granular control is a significant leap forward from the current methods of fine-tuning, which often involve broad adjustments that can have unintended consequences.
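As a rough illustration of that debugging workflow, the Python sketch below assumes the model exposes, for each output, a mapping from training-source identifiers to attribution weights. That record format, the source names, and the `top_sources` helper are hypothetical, since the article does not describe Steerling-8B’s actual interface.

```python
from collections import Counter


def top_sources(attributions, flagged_ids, k=2):
    """Rank training sources by total attribution weight across flagged outputs.

    attributions: dict mapping output id -> {source id: attribution weight}
    flagged_ids:  ids of the outputs a reviewer marked as problematic
    """
    totals = Counter()
    for output_id in flagged_ids:
        for source_id, weight in attributions.get(output_id, {}).items():
            totals[source_id] += weight
    # most_common sorts sources from highest to lowest accumulated weight.
    return totals.most_common(k)


if __name__ == "__main__":
    # Hypothetical attribution records for three generated outputs.
    records = {
        "out1": {"forum_dump": 0.7, "news": 0.3},
        "out2": {"forum_dump": 0.6, "wiki": 0.4},
        "out3": {"wiki": 1.0},
    }
    for source, weight in top_sources(records, ["out1", "out2"]):
        print(f"{source}: {weight:.2f}")
```

Aggregating over only the flagged outputs keeps the ranking focused on the sources implicated in the problem rather than on the sources that are merely most common overall.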

In fields like journalism, where accuracy and attribution are paramount, an interpretable LLM could revolutionize content generation. Journalists could use such models to draft articles, with the assurance that every piece of information can be verified by tracing it back to its original source. This would not only enhance the credibility of AI-assisted journalism but also streamline the fact-checking process, a critical bottleneck in modern news production. The ability to understand how an LLM interprets nuanced concepts like irony or satire would also be transformative, allowing for more sophisticated and contextually aware content creation.

The financial sector stands to benefit immensely from interpretable LLMs. Regulatory compliance is a major concern, and models used for risk assessment, fraud detection, or investment advice must be transparent and auditable. Steerling-8B’s architecture allows for the clear delineation of factors influencing a model’s decisions. For example, in credit scoring, a model could be trained to consider credit history, income, and debt-to-income ratio, while explicitly excluding protected characteristics like race or gender. The ability to demonstrate that a model is adhering to these ethical and legal guidelines would be invaluable for financial institutions.
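The credit-scoring example can be sketched in a few lines of Python. The sketch assumes a model whose decision is a weighted sum over named concept values, so that protected concepts can be dropped before scoring. The `score_applicant` function, the concept names, and the weights are hypothetical and are not drawn from Guide Labs’ system.

```python
# Hypothetical set of protected concepts that must never affect the score.
PROTECTED = frozenset({"race", "gender"})


def score_applicant(concepts, weights, blocked=PROTECTED):
    """Score an applicant from named concept values, ignoring blocked concepts.

    Returns the total score and the per-concept contributions, so an auditor
    can see exactly which factors were used.
    """
    contributions = {
        name: weights.get(name, 0.0) * value
        for name, value in concepts.items()
        if name not in blocked
    }
    return sum(contributions.values()), contributions


if __name__ == "__main__":
    applicant = {
        "credit_history": 0.8,
        "income": 0.5,
        "debt_to_income": 0.4,
        "gender": 1.0,  # present in the input, excluded from the score
    }
    # Assumed weights; a real system would learn or calibrate these.
    weights = {
        "credit_history": 2.0,
        "income": 1.0,
        "debt_to_income": -1.5,
        "gender": 3.0,
    }
    score, used = score_applicant(applicant, weights)
    print(f"score={score:.2f}, factors used={sorted(used)}")
```

Returning the contributions alongside the score is the auditable part: a reviewer can confirm that no protected concept appears among the factors. It does not, on its own, rule out proxies for protected attributes hidden in the remaining inputs.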

Scientific discovery is another domain where Guide Labs’ innovation could have a profound impact. While LLMs have shown promise in analyzing complex scientific literature and generating hypotheses, understanding the underlying reasoning behind these outputs is crucial for scientific validation. In drug discovery, for instance, if an LLM identifies a potential new drug compound, scientists would need to understand why the model made that prediction. Steerling-8B’s traceable outputs could provide this critical insight, accelerating the pace of scientific research and enabling more informed decision-making. The ability to identify specific patterns or correlations in vast datasets that lead to a particular conclusion could unlock new avenues of scientific inquiry.

The company’s strategic direction, as outlined by Adebayo, involves building larger, more capable models that retain this inherent interpretability. Its $9 million seed round and Y Combinator backing underscore investor confidence in the approach. The planned offering of API and agentic access will allow a wider range of developers and organizations to experiment with and integrate Steerling-8B into their own applications, fostering a community around interpretable AI. This democratization of interpretable technology is seen by Adebayo as a crucial step towards ensuring that advanced AI benefits humanity as a whole.

The long-term vision articulated by Adebayo extends beyond immediate technological advancements. He views the current methods of training LLMs as "primitive" and believes that democratizing inherent interpretability is a vital undertaking for the future of the human race. As AI systems become more intelligent and influential, the need for them to be transparent and understandable becomes increasingly critical. The prospect of highly intelligent AI making decisions on behalf of humans without clear rationale is a significant concern that Guide Labs aims to address. By building AI that is inherently interpretable, they are paving the way for a future where humans can confidently collaborate with, rather than blindly trust, advanced artificial intelligence. This foundational shift promises to make AI development more ethical, responsible, and ultimately, more beneficial to society.