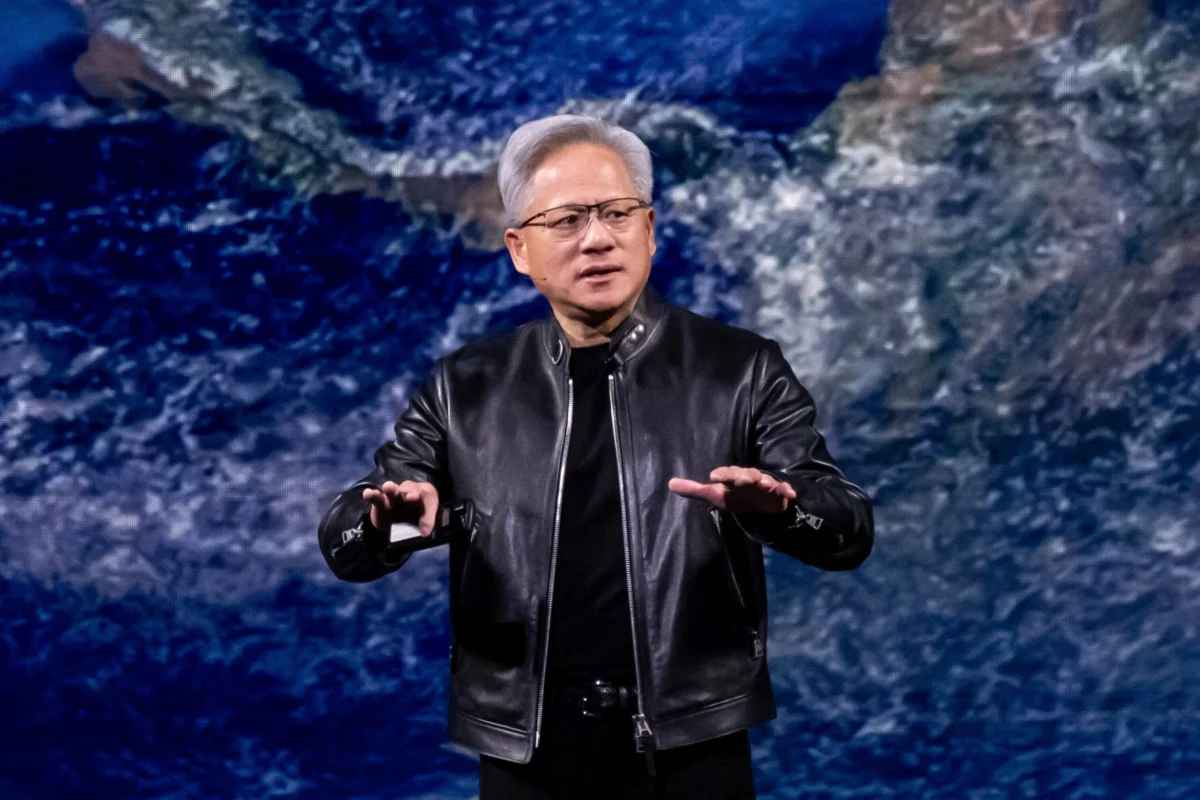

Just a few years ago, artificial intelligence was widely seen as a novelty, best known for generating uncanny, sometimes humorous, and frequently inaccurate images and videos that spread across social media feeds. It felt more like a parlor trick than a foundational shift. Today, AI has moved well past that phase and is embedded in nearly every industry and much of daily life. New, more capable models arrive almost monthly. Adoption now spans sectors from Hollywood, where AI's effect on filmmaking and content creation is hotly debated, to corporate offices worldwide. Even if AI has not yet delivered a measurable productivity boost for individual employees on routine tasks such as managing email, its presence in the workplace is undeniable. That expansion requires an enormous investment in infrastructure, the backbone of the AI boom. Nvidia is the leading supplier of that infrastructure, and its CEO, Jensen Huang, expects demand for it to surge.

During his keynote on Monday at Nvidia's annual GTC conference in San Jose, Huang offered a striking projection. He said Nvidia had effectively doubled its internal demand forecast for the coming year alone. Looking further ahead, he said, "I see through 2027 at least $1 trillion [in AI infrastructure demand]." He then went further: "In fact, we are going to be short. I am certain computing demand will be much higher than that." Coming from the head of the company widely regarded as the AI industry's foundational supplier, the prediction signals that Nvidia expects the sector's rapid growth to continue for years.

Anticipating demand on that scale, Huang is already planning for it. One of his ideas is an unconventional incentive meant to attract and retain top engineering talent while maximizing what his workforce can produce: giving engineers "AI tokens" worth roughly half their base salary. The proposal reflects how central access to compute has become to modern AI development.

The AI boom is pushing infrastructure spending to new heights. Across the tech industry, companies are collectively pouring $700 billion into building data centers. That sum rivals the entire gross domestic product (GDP) of developed economies such as Sweden, and it is more than double the inflation-adjusted cost of the Apollo program that landed humans on the moon. Nvidia is a critical, virtually indispensable supplier for this buildout, providing the high-performance graphics processing units (GPUs) that power these "AI factories." Huang's $1 trillion demand figure is further evidence that the expansion is running at full throttle with no sign of slowing. Nvidia's dominance persists even as competitors such as Advanced Micro Devices (AMD), led by CEO Lisa Su, work to close the technology and market-share gap, though AMD has at times struggled to win deals as large as Nvidia's.

Nvidia's aggressive expansion and bullish outlook come amid lingering concerns about an "AI bubble," raised by prominent business leaders and financial analysts. Microsoft CEO Satya Nadella has cautioned against speculative excess, urging a focus on real-world applications and value creation rather than hype. Michael Burry, the "Big Short" investor famous for betting against the housing market before the 2008 financial crisis, has also voiced skepticism, suggesting that some AI valuations may be inflated and unsustainable. Such concerns often draw on past tech bubbles, in which rapid growth outpaced profitability and practical deployment. Proponents counter that AI is a fundamental shift with applications across every industry, and that today's massive investments lay the foundation for future economic growth and innovation.

Huang's GTC keynote went beyond hardware and financial projections to the more speculative implications of AI. He made the provocative claim that AI agents could soon become sophisticated enough to effectively "run the world," raising questions about autonomy, governance, and the future role of human decision-making. Such assertions feed ongoing debates, within the AI community and beyond, about ethical frameworks, control mechanisms, and the societal impact of highly autonomous systems. Huang also announced plans for "space-based computing," which would put AI processing capacity in orbit. The idea is driven partly by the immense energy demands of terrestrial data centers, a challenge Elon Musk has also highlighted as requiring new solutions. Computing in space could potentially offer advantages in cooling, access to solar power, and strategic processing locations, though it introduces significant engineering and logistical complexity.

"We are completely resetting and starting the largest buildout of human history," Huang said. "Most of the world's industries building AI factories, building chip plants, building computer plants are represented here today." Nvidia's recent results lend weight to his claims. Last month, the company reported record revenue of $215.9 billion for fiscal year 2026, up 65% from the previous year. Data center revenue for the fourth quarter alone rose 75% year over year to $62.3 billion. The figures confirm Nvidia's market leadership and the explosive demand for the components that power AI.
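Because these revenue figures are quoted alongside growth rates, a quick sanity check is to back out the prior-period values they imply. The short Python sketch below does that arithmetic. It is illustrative only: the helper name `implied_prior` is hypothetical, and because the reported percentages are rounded, the results approximate rather than reproduce Nvidia's reported prior-year figures.

```python
# Back out the prior-period value implied by a current figure and its growth rate.
# Illustrative sketch: reported growth rates are rounded, so results are approximate.


def implied_prior(current: float, growth_pct: float) -> float:
    """Return the prior-period value implied by `current` and its year-over-year growth."""
    return current / (1 + growth_pct / 100)


if __name__ == "__main__":
    fy2026_revenue = 215.9  # billions of USD, fiscal 2026, as reported
    data_center = 62.3      # billions of USD, data center revenue as reported

    print(f"Implied prior-year revenue: ${implied_prior(fy2026_revenue, 65):.1f}B")
    print(f"Implied prior-period data center revenue: ${implied_prior(data_center, 75):.1f}B")
```

Run as written, it prints an implied prior-year revenue of about $130.8 billion and an implied prior-period data center figure of about $35.6 billion.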

AI Tokens: The Future of Compensation and Amplified Productivity?

As business leaders look to AI to boost worker productivity, Huang offered a glimpse of how Nvidia plans to do it internally: by giving engineers "tokens," a kind of currency within the AI ecosystem, to amplify their output. The approach reflects how central computing resources have become to cutting-edge AI development.

"I could totally imagine in the future every single engineer in our company will need an annual token budget," Huang said. Describing the potential scale, he added, "They're going to make a few hundred thousand a year as their base pay. I'm going to give them probably half of that on top of it as tokens so that they could be amplified 10 times." This is less a bonus than a strategic investment in compute, tying an engineer's potential output directly to their access to AI processing power.

In AI models, "tokens" are the basic units of text, typically whole words or fragments of words, that models use to process language, recognize patterns, and generate responses. They are, in effect, the unit in which AI usage is measured and billed. OpenAI estimates that one token corresponds to roughly four characters of English text, so a typical one- or two-sentence prompt consumes about 30 tokens. The phrase "Fortune Magazine," for example, might be split into sub-word pieces such as "For," "tune," "Mag," "az," and "ine." At the allowance levels Huang described, engineers would have access to billions of tokens a year. That much compute would let them run extensive research, complex simulations, and rapid design iterations, and explore new AI applications at a speed and scale otherwise out of reach. In this scenario, tokens would be not just a perk but a core tool for computationally intensive research and development at Nvidia.
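The arithmetic behind "billions of tokens" can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not a description of how Nvidia budgets tokens. It applies OpenAI's four-characters-per-token rule of thumb, while the helper names, the $300,000 base salary (Huang said only "a few hundred thousand"), and the $10-per-million-token price are all hypothetical. Real per-token prices vary widely by model and by whether tokens are input or output.

```python
# Back-of-the-envelope token arithmetic (illustrative sketch, not Nvidia's method).
# Assumptions:
#   - CHARS_PER_TOKEN: OpenAI's rule of thumb of ~4 characters per English token.
#   - The $300,000 salary and $10-per-million-token price below are hypothetical.

CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token count: characters divided by 4, rounded up (0 for empty text)."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def annual_token_budget(base_pay: float, token_share: float,
                        usd_per_million_tokens: float) -> int:
    """Approximate tokens per year bought by an allowance of base_pay * token_share dollars."""
    allowance_usd = base_pay * token_share
    return int(allowance_usd / usd_per_million_tokens * 1_000_000)


if __name__ == "__main__":
    # 16 characters -> 4 tokens by the heuristic; real tokenizers may split differently.
    print(estimate_tokens("Fortune Magazine"))
    # Hypothetical: $300,000 base pay, half of it as tokens, $10 per million tokens.
    budget = annual_token_budget(300_000, 0.5, 10.0)
    print(f"{budget:,} tokens per year")  # 15,000,000,000 tokens per year
```

Under these assumptions, half of a $300,000 salary buys about 15 billion tokens a year. Because the budget scales inversely with price, a model that costs twice as much per token would halve that figure. The character heuristic also counts "Fortune Magazine" as four tokens, while the sub-word split described above has five pieces, a reminder that the rule of thumb is only approximate.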

Huang expects the model to become a benchmark, with other tech firms adopting tokens as a recruiting tool for the industry's most sought-after talent. "It is now one of the recruiting tools in Silicon Valley: how many tokens come along with my job," he said. His reasoning is straightforward: "The reason for that is very clear because every engineer that has access to tokens will be more productive." The shift suggests that computational resources are becoming as important as pay in determining what an engineer can deliver, extending traditional employment benefits into the realm of compute.