The rapid, sometimes disorienting pace of technological change today, epitomized by drone swarms striking across borders with chilling impunity, has clear parallels in historical shifts that irrevocably altered human society. The rise of gunpowder warfare in the 15th century, marked by the invention of the matchlock gun, the first firearm with a mechanical firing device, ushered in an era of unprecedented military power and geopolitical restructuring. This innovation was not merely a new tool. It fundamentally changed how wars were fought, democratized violence to a degree, and ultimately enabled the rise of new empires capable of wielding this destructive force. In the 17th century, the Italian physicist Giovanni Borelli, a pioneer of biomechanics, articulated a vision of machines driven by pulleys that mimicked animal actions, a prescient glimpse of a future in which artificial mechanisms would extend human capabilities. His concepts resonate today as figures like Elon Musk describe ambitions for robots intelligent enough to manage household chores or even perform complex surgery, transforming both daily life and specialized professions.

This interplay between immediate, transformative innovation and deep historical roots is the intellectual core of the "Calculating Empires" exhibition, a monumental 24-meter-long mural currently drawing audiences at the Design Museum in Barcelona. The artwork is a powerful visual metaphor, portraying technological development not as a linear progression but as a complex tapestry that unfolds at once with the frenetic energy of *Everything Everywhere All at Once* and the deliberate, often unseen machinations of *Slow Horses*. It traces an expansive journey from foundational communication technologies such as the printing press to today's proliferation of deepfakes, and from the ancient Peruvian quipu, an intricate system of knotted cords used for calculation and record-keeping, to the vast, "planetary-scale" data systems that now underpin global commerce and communication. This visual narrative invites viewers to consider the enduring questions of power, control, and societal impact that accompany every major technological leap.

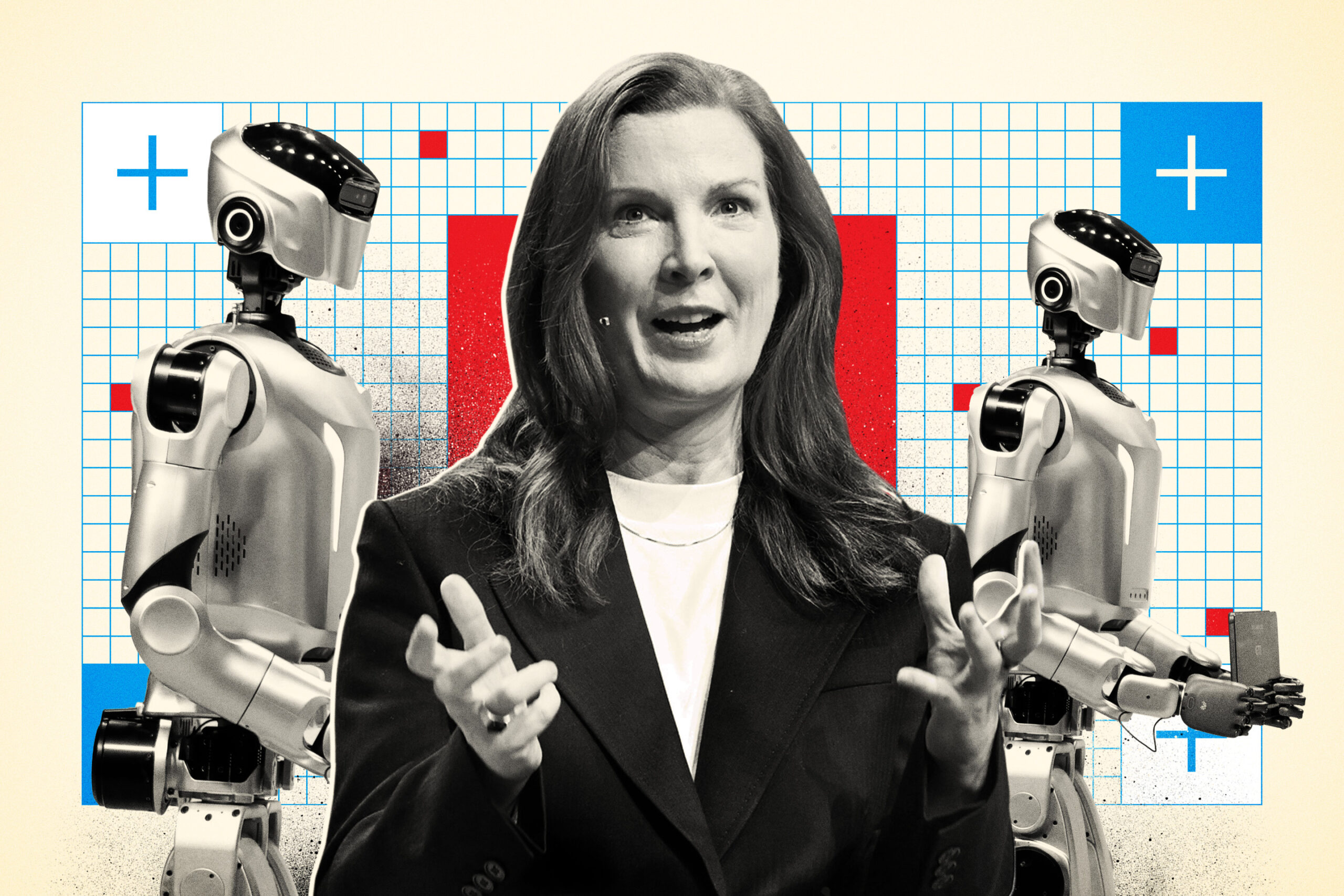

Kate Crawford, an artificial intelligence research professor at the University of Southern California and co-creator of "Calculating Empires," underscored the mural’s relevance during her address at the Mobile World Congress (MWC) in Barcelona in March. "What I find really interesting is, when people go into this installation, it helps you put this moment in perspective," Crawford explained, highlighting the artwork’s capacity to ground the present in a rich historical context. Created over four years in collaboration with visual artist Vladan Joler, the mural is more than an artistic display; it is an urgent call to introspection. It compels every observer to examine the unseen architects of our technological future: who writes the rules, who makes the critical decisions, and, ultimately, who determines what matters as technology undergoes these fundamental shifts.

Crawford elaborated on the prevalent sense of "technological presentism," in which people feel caught in a maelstrom of unprecedented, rapid change, detached from any historical mooring. "People feel like we’re living in this technological presentism and crazy amount of change," she observed. "So, the ability to step back and say, ‘what have we learned over 500 years?’ [matters]." For Crawford, creating the mural was a "transformative project" because it crystallized a crucial insight: "history is not just about technical innovation. It’s about who has the power to set the rules that we will be living within." The distinction matters because it implies that technological progress, however objective it may seem, is always embedded in and shaped by existing power structures and human intentions.

This historical lens is particularly important for "agentic AI," which Crawford sees as being at a pivotal juncture. "This is why agentic AI is so important right now, because it’s a rapidly evolving field. The standards are not yet set," Crawford emphasized. The conversations unfolding in forums like the Mobile World Congress are not merely academic; they are foundational. "It’s going to be people here, in rooms like this, at places like Mobile World Congress, who are going to have these conversations: what do we want those standards to look like, how do we implement them in our systems, and how do we protect ourselves and our clients?" The stakes, she stressed, could not be higher. "Because this is the big moment to actually make sure that this is a technology that is profoundly useful and helpful and not one that opens up vulnerabilities and attack vectors and new attack surfaces and actually could be cognitively really quite dangerous as well." The potential for misuse, unintended consequences, and even psychological manipulation through advanced AI agents demands proactive, ethical governance.

The Mobile World Congress is itself a phenomenon of immense scale and influence, attracting more than 100,000 delegates who navigate eight sprawling, cavernous halls filled with the technologies poised to define the future. Global giants such as Huawei and Google, alongside companies like Honor and Qualcomm, sponsor colossal pavilions showcasing new products that promise seamless integration across our lives: linking cars to phones, enabling robots to assist people with disabilities, and embedding the internet directly into our eyewear. The event is also a critical arena where governments, eager to project influence and attract investment, jostle for position with the many companies vying for dominance in the burgeoning artificial intelligence revolution.

Beyond the flashing neon lights and interactive screens, MWC carves out space for serious debate. On its large stages, leading figures in technology hold the essential conversations that often get lost amid the commercial spectacle. "Move fast and break things," the mantra Mark Zuckerberg once championed in 2012, now seems a relic of a bygone era. Today, as Crawford and others argue, the stakes are simply too high for such unbridled, consequence-free innovation.

We are, in essence, engaged in a live, global discussion about the very meaning of intelligence. Demis Hassabis, co-founder of DeepMind, has suggested that artificial general intelligence (AGI), AI capable of understanding, learning, and applying knowledge across a wide range of tasks as a human can, could be realized in as little as five years. That projection raises existential questions: in such a world, who, or what, will make the decisions? Will it be "human in the loop," where AI assists but humans retain final authority? "Human in the lead," where AI systems are subordinate to human strategic direction? Or, more disturbingly, "no human needed at all"? These are not hypothetical musings but urgent dilemmas. Mo Gawdat, the former chief business officer of Google X, has warned of the risks of a "short-term dystopia," arguing that governments, civil society organizations, and regulators are already struggling to control the accelerating effects of machines capable of autonomous learning and decision-making.

Crawford further challenged the conventional understanding of "intelligence," especially when applied to AI. "What do we mean by intelligence?" she queried, prompting a deeper historical reflection. "The history of the term ‘intelligence’ is a troubled one. It’s been used to divide populations, to drive programs about who is valuable and who is not." She pointed out the fundamental flaw in comparing AI agents directly to human intelligence. "We’re trying to compare agents to human intelligence. They’re actually completely different. This [AI intelligence] is statistical probability at scale. These are systems that are following tasks in complex environments." This distinction is crucial, Crawford argued, because it necessitates a different set of investigative questions: "what are agents doing? How can we track that, and how can we better understand the way it’s going to change our own workflows and, much more importantly, how we live?" Understanding AI on its own terms, rather than anthropomorphizing it, is key to effective governance.

The ethical landscape surrounding AI is fraught with tension, particularly over the potential military applications of agentic AI. Crawford highlighted ongoing debates between prominent AI developers such as OpenAI and Anthropic and the U.S. Department of War, which raise critical questions about the "red lines" for agent use. "Imagine agents in the battlefield," she said, a scenario that, disturbingly, requires little imagination. Reports from conflicts such as the one in Iran already suggest the deployment of AI-enabled bombing operating "at the speed of thought." One of AI’s most potent, and terrifying, functions is "decision compression": dramatically shortening the time between strategic conception and lethal execution. The traditional "kill chain," the multi-step process from target identification to engagement, is being dangerously compressed, potentially accelerating conflict to unprecedented speeds. Craig Jones, an academic at Newcastle University, put the shift starkly to The Guardian: "You’ve got scale and you’ve got speed, you’re [carrying out the] assassination-style strikes at the same time as you’re decapitating the regime’s ability to respond with all the aerial ballistic missiles. That might have taken days or weeks in historic wars. [Now] you’re doing everything at once." This confluence of speed and scale presents an almost insurmountable challenge to traditional ethical frameworks and oversight mechanisms.

In the face of such rapid, autonomous decision-making, Crawford advocated for "accountability forensics": robust systems capable of tracing precisely where decisions are made within complex AI architectures. Currently, she warned, we are witnessing widespread "accountability laundering," in which responsibility is deliberately obscured. It mirrors the "sloping shoulders syndrome" of the U.K. civil service, where individuals and departments deftly dodge responsibility. "We are seeing a type of shell game where [people say] ‘is it the designer [who is responsible]? Is it the deployer? Is it the enterprise client? Is it the end user?’ And everyone can say, ‘well, we don’t really know yet’." Crawford was firm: "That’s not going to be acceptable." She predicted that regulators will demand "a very strong chain of accountability so you know exactly who is responsible when." Such clarity is essential not only for legal recourse but also for building trust and ensuring ethical development.

Looking ahead, Crawford issued a stark warning about privacy. If even a fraction of the predictions and innovations on show at MWC 2026 materialize, AI agents will soon permeate every facet of our lives. They will be able to read and cache every half-written text, every deleted image, every email left in draft, every video recorded on smart glasses, and every private conversation. Such pervasive surveillance, even if unintentional, "upends privacy as we have known it," Crawford cautioned. "We’re at the very beginning of understanding what that looks like." The implications are staggering, demanding not just technical solutions but a fundamental re-evaluation of societal norms, legal frameworks, and individual rights. The conversations surrounding these issues must therefore be substantive and, crucially, must happen now, before the technological tide sweeps away our capacity for informed choice and democratic oversight. History reminds us that while innovation is inevitable, its direction and impact are ultimately shaped by the rules we choose to set.