Enterprise Retrieval Augmented Generation (RAG) pipelines are often optimized for a single search behavior and fail silently on others. A model trained to synthesize cross-document reports may handle precise, constraint-driven entity search poorly. Conversely, an agent tuned for simple lookup tasks can fall apart on multi-step reasoning over a large collection of unstructured internal notes. These failures often go undetected until something breaks in operation.

Databricks is addressing this problem with KARL, short for Knowledge Agents via Reinforcement Learning. Instead of training specialized agents for isolated tasks, the company developed a reinforcement learning algorithm that trains a single agent to handle six distinct enterprise search behaviors at once. Databricks claims the unified agent rivals Claude Opus 4.6 on a purpose-built benchmark at 33% lower cost per query and 47% lower latency. The agent was trained entirely on synthetic data that it generated itself, with no human labeling. The benchmark, KARLBench, was developed by Databricks specifically to evaluate enterprise search behaviors.

Jonathan Frankle, Chief AI Scientist at Databricks, described the challenge in an exclusive interview with VentureBeat. He drew a distinction between the tasks KARL targets and the verifiable tasks behind most recent reinforcement learning successes. "A lot of the big reinforcement learning wins that we’ve seen in the community in the past year have been on verifiable tasks where there is a right and a wrong answer," Frankle explained. "The tasks that we’re working on for KARL, and that are just normal for most enterprises, are not strictly verifiable in that same way." Enterprise knowledge work often involves interpretation and synthesis, where a definitive right or wrong answer is hard to pin down.

Those tasks include synthesizing intelligence across product manager meeting notes, reconstructing competitive deal outcomes from fragmented customer records, answering questions about account history where no single document holds the full answer, and generating battle cards from unstructured internal data. None of these has a single correct answer that an automated system can check against. "Doing reinforcement learning in a world where you don’t have a strict right and wrong answer, and figuring out how to guide the process and make sure reward hacking doesn’t happen – that’s really non-trivial," Frankle emphasized. "Very little of what companies do day to day on knowledge tasks are verifiable." This ambiguity calls for a training approach that can handle uncertainty rather than simply match outputs against predefined correct answers.

The Generalization Trap in Enterprise RAG

Standard RAG architectures break down on ambiguous, multi-step queries that draw on fragmented internal data, much of which was never designed or structured for querying. This "generalization trap" is what KARL aims to overcome.

To assess KARL, Databricks built the KARLBench benchmark, which measures performance across six enterprise search behaviors: constraint-driven entity search, which identifies specific entities that satisfy complex criteria; cross-document report synthesis, which consolidates information from multiple sources into a coherent report; long-document traversal with tabular numerical reasoning, which requires navigating lengthy documents and calculating over embedded tables; exhaustive entity retrieval, which requires finding every relevant entity; procedural reasoning over technical documentation, which means understanding and applying the steps in technical manuals; and fact aggregation over internal company notes, which pieces together facts from informal records. The final task, named PMBench, was built from Databricks’ own internal product manager meeting notes, a fragmented, ambiguous, and unstructured dataset that challenges even frontier models.

The KARL research found that training an agent on any single one of these tasks and testing it on the others produced consistently poor results. Multi-task reinforcement learning, by contrast, generalized. When the Databricks team trained KARL on synthetic data for just two of the six tasks, it performed well on all four tasks it had never seen in training. That matters for enterprises whose data and query types keep changing.
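The train-on-two, test-on-four setup described above can be sketched as a small evaluation helper. This is a minimal illustration, not Databricks' evaluation code: the task identifiers are our own labels for the six behaviors, and the article does not say which two tasks were used for training, so any choice passed as `train_tasks` is hypothetical.

```python
from statistics import mean

# The six KARLBench behaviors named in the article (identifiers are our own labels).
KARLBENCH_TASKS = [
    "constraint_driven_entity_search",
    "cross_document_report_synthesis",
    "long_document_tabular_reasoning",
    "exhaustive_entity_retrieval",
    "procedural_reasoning",
    "fact_aggregation_pmbench",
]


def split_tasks(all_tasks, train_tasks):
    """Return (in_distribution, held_out) task lists, preserving order."""
    unknown = set(train_tasks) - set(all_tasks)
    if unknown:
        raise ValueError(f"unknown tasks: {sorted(unknown)}")
    in_dist = [t for t in all_tasks if t in train_tasks]
    held_out = [t for t in all_tasks if t not in train_tasks]
    return in_dist, held_out


def generalization_report(scores, train_tasks):
    """Average per-task scores separately for trained and held-out tasks.

    scores: dict mapping task name -> score from your own evaluation run.
    train_tasks: the tasks the agent was trained on (hypothetical choice).
    """
    in_dist, held_out = split_tasks(list(scores), train_tasks)
    return {
        "in_distribution_mean": mean(scores[t] for t in in_dist),
        "held_out_mean": mean(scores[t] for t in held_out),
        "held_out_tasks": held_out,
    }
```

Reporting the held-out mean separately from the in-distribution mean is what exposes the gap the article describes: a single-task agent can look strong on the first number and weak on the second.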

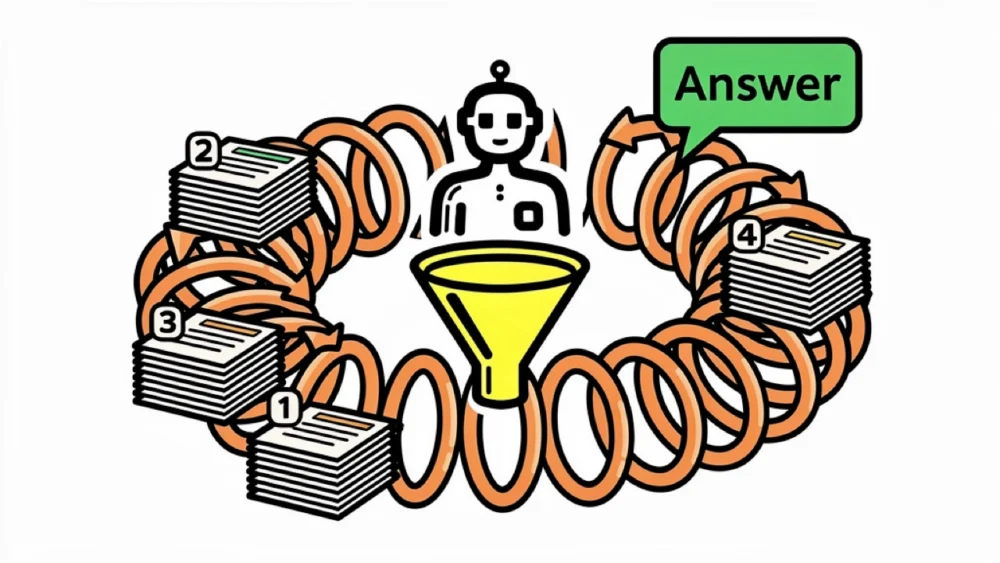

Consider building a competitive battle card for a financial services customer. This is not a simple retrieval exercise. The agent must identify the relevant customer accounts, filter them by recency, reconstruct the history of past competitive deals involving those accounts, and infer how those deals likely turned out. None of that inferred information is labeled anywhere in the raw data; it has to be synthesized from fragments. Frankle describes KARL’s process as "grounded reasoning": running a multi-step reasoning chain while anchoring every step in retrieved facts. He frames it as RAG pushed much further: "You can think of this as RAG, but like RAG plus plus plus plus plus plus, all the way up to 200 vector database calls."
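The "grounded reasoning" loop can be pictured as repeated retrieval under a call budget, where each step records the evidence it rests on. The sketch below is an assumption-laden illustration rather than KARL's implementation: `search` stands in for a vector database call, `next_query` stands in for the policy model's decision about what to look up next, and the final "answer" is a toy concatenation of evidence. Only the 200-call ceiling comes from the article.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

MAX_SEARCH_CALLS = 200  # upper bound on vector database calls quoted in the article


@dataclass
class Step:
    query: str
    evidence: List[str]  # retrieved passages that support this step


@dataclass
class Trace:
    steps: List[Step] = field(default_factory=list)
    answer: Optional[str] = None


def grounded_search(
    question: str,
    search: Callable[[str], List[str]],
    next_query: Callable[[str, List[Step]], Optional[str]],
    max_calls: int = MAX_SEARCH_CALLS,
) -> Trace:
    """Run retrieval steps until next_query returns None or the budget is spent."""
    trace = Trace()
    while len(trace.steps) < max_calls:
        query = next_query(question, trace.steps)  # hypothetical policy decision
        if query is None:
            break
        trace.steps.append(Step(query, search(query)))
    # Only steps backed by at least one retrieved passage count as grounded.
    grounded = [s for s in trace.steps if s.evidence]
    if grounded:
        # Toy answer: join the top passage of each grounded step.
        trace.answer = " | ".join(s.evidence[0] for s in grounded)
    return trace
```

Keeping the evidence attached to each step makes it possible to audit afterwards which retrieved passages a conclusion depended on.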

The RL Engine: Why OAPL Matters

KARL is trained with a new reinforcement learning algorithm called OAPL, which stands for Optimal Advantage-based Policy Optimization with Lagged Inference policy. It was developed jointly by researchers from Cornell University, Databricks, and Harvard University and described in a separate research paper published the week before KARL’s announcement.

Reinforcement learning for large language models (LLMs) commonly relies on on-policy algorithms such as GRPO (Group Relative Policy Optimization). These algorithms assume that the model generating the training data and the model being updated are in sync. In distributed training, which is needed at modern scale, they rarely are. Earlier methods corrected for the mismatch with importance sampling, which often introduces significant variance and instability. OAPL instead embraces the off-policy nature of distributed training, using a regression objective that stays stable with policy lags of more than 400 gradient steps, 100 times more off-policy than prior approaches could handle. In code generation experiments, OAPL matched a GRPO-trained model using roughly one-third of the training samples.
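A small numeric sketch shows why importance sampling becomes unstable as the data-generating policy drifts from the policy being updated. The sequence-level importance weight is the product of per-token probability ratios, so a small per-token drift compounds exponentially with sequence length. The second function is a generic squared-error loss on a log-probability ratio, included only to contrast how a regression-style quantity scales; the article does not give OAPL's actual objective, and this is not it.

```python
import math


def sequence_importance_weight(logp_current, logp_behavior):
    """Sequence-level importance ratio: the product of per-token ratios.

    logp_current: per-token log-probs under the policy being updated.
    logp_behavior: per-token log-probs under the (lagged) policy that
    generated the sample.
    """
    return math.exp(sum(c - b for c, b in zip(logp_current, logp_behavior)))


def squared_regression_loss(log_ratio, target, beta=1.0):
    """Generic squared-error loss on a scaled log-ratio (illustrative stand-in)."""
    return (beta * log_ratio - target) ** 2


# A drift of 0.05 nats per token over 200 tokens gives a total log-ratio of
# about 10, so the importance weight is about e^10 (tens of thousands),
# while the log-ratio itself only grows linearly with sequence length.
weight = sequence_importance_weight([-1.0] * 200, [-1.05] * 200)
```

With weights this large, a handful of samples dominate each gradient estimate, which is the variance problem the article attributes to importance-sampling corrections.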

OAPL’s sample efficiency is what keeps the training budget within reach for enterprises. Because it reuses previously collected "rollouts" (sequences of actions and their outcomes) rather than requiring fresh on-policy data for every update, the full KARL training run finished within a few thousand GPU hours. That puts it in the range of a realistic project for an enterprise team rather than a research-lab effort.
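Rollout reuse can be sketched as a buffer that keeps samples until the policy that produced them is too many gradient steps behind the current one. This is an illustrative data structure under our own assumptions, not the training system Databricks built: the class and field names are hypothetical, the default of 400 borrows the lag figure from the article purely as an example, and rollouts are assumed to arrive in non-decreasing `policy_step` order.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, List


@dataclass
class Rollout:
    trajectory: str   # placeholder for the recorded actions and outcomes
    reward: float
    policy_step: int  # gradient step of the policy that generated this rollout


class LaggedRolloutBuffer:
    """Keeps rollouts whose generating policy is at most max_lag steps old."""

    def __init__(self, max_lag: int = 400):
        self.max_lag = max_lag
        self._items: Deque[Rollout] = deque()

    def add(self, rollout: Rollout) -> None:
        # Assumes rollouts are added in non-decreasing policy_step order.
        self._items.append(rollout)

    def usable(self, current_step: int) -> List[Rollout]:
        """Evict stale rollouts, then return those still eligible for training."""
        while self._items and current_step - self._items[0].policy_step > self.max_lag:
            self._items.popleft()
        return list(self._items)
```

The larger the lag an algorithm tolerates, the more updates each rollout can feed before eviction, which is where the savings in generation compute come from.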

Agents, Memory, and the Context Stack

In recent months, the industry has debated whether contextual memory, often called agentic memory, will replace traditional RAG. Frankle sees it not as an either/or choice but as a layered stack. At the bottom is the vector database, with millions of entries that are far too large to fit in an LLM’s context window. At the top is the context window itself, which is limited in size. Between the two, compression and caching layers are emerging that determine how much of what an agent has already learned it can carry forward.

For KARL, this is not abstract. Some KARLBench tasks required up to 200 sequential vector database queries, with the agent refining its searches, verifying details, and cross-referencing documents before committing to an answer. That exhausts the context window many times over. Rather than using a separate, pre-trained summarization model, the Databricks team let KARL learn context compression end-to-end through reinforcement learning. When the context grows too large, the agent compresses it and continues, and the only training signal is the reward at the end of the task. Removing the learned compression dropped accuracy on one benchmark from 57% to 39%. "We just let the model figure out how to compress its own context," Frankle stated. "And this worked phenomenally well."
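The control flow of compress-and-continue can be sketched in a few lines. This is a minimal sketch under stated assumptions: in KARL the compression is performed by the agent itself and learned through reinforcement learning, whereas here `compress` is an ordinary caller-supplied function, and context size is measured in characters rather than tokens to keep the example self-contained.

```python
from typing import Callable, List


def run_with_compression(
    observations: List[str],
    compress: Callable[[str], str],
    max_context_chars: int,
) -> str:
    """Append observations to a running context, compressing on overflow.

    observations: text returned by successive retrieval steps.
    compress: maps the current context to a shorter one (hypothetical
    stand-in for the agent's learned compression).
    max_context_chars: context budget, in characters for simplicity.
    """
    context = ""
    for obs in observations:
        candidate = context + obs + "\n"
        if len(candidate) > max_context_chars:
            # Compress what has accumulated so far, then add the new observation.
            # Nothing here guarantees the result fits; a real system would
            # need to retry or truncate.
            candidate = compress(context) + obs + "\n"
        context = candidate
    return context
```

Because the loop never inspects what `compress` keeps or discards, the only way to judge a compression strategy is by the quality of the final answer, which mirrors the end-of-task reward described above.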

Where KARL Falls Short

Frankle was candid about KARL’s failure modes. The agent struggles most with highly ambiguous questions, particularly those with multiple valid answers, where it cannot tell whether the question is genuinely open-ended or merely hard to answer because information is missing. That judgment call remains an unsolved problem.

The model also exhibits what Frankle described as "giving up early" on some queries: it stops gathering information before producing a final answer. He pushed back on framing this as a pure failure, noting that the most expensive queries are often the ones the model is most likely to get wrong. In those cases, stopping can be the right call.

KARL is also currently limited to vector search. Tasks that require SQL queries, file system search, or Python-based calculation are out of scope. Frankle said these capabilities are high on the roadmap, but they are not part of the current system.

What This Means for Enterprise Data Teams

KARL raises three decisions that enterprise data teams should revisit as they evaluate their retrieval infrastructure.

The first is pipeline architecture. If an organization’s RAG agent is optimized for a single search behavior, the KARL results suggest it is likely failing on others. Multi-task training across diverse retrieval behaviors produces models that generalize, while narrow, single-purpose pipelines do not.

The second is why reinforcement learning (RL) matters here, which is more than a training detail. Databricks tested an alternative: distilling knowledge from expert models through supervised fine-tuning. That approach improved in-distribution performance (performance on tasks similar to those seen in training) but yielded negligible gains on tasks the model had never encountered. RL, by contrast, developed general search behaviors that transferred to new tasks. For enterprise teams facing heterogeneous data sources and unpredictable query types, this capacity for generalization is not just an advantage; it is the entire game.

The third is what RL efficiency means in practice. A model trained to search well completes tasks in fewer, more targeted steps, stops earlier on queries it cannot confidently answer, diversifies its search strategy instead of repeating failed queries, and compresses its own context instead of running out of room. The argument for purpose-built search agents, rather than routing every query through general-purpose frontier APIs, is not only about cost. It is about building a model that knows how to do its job.