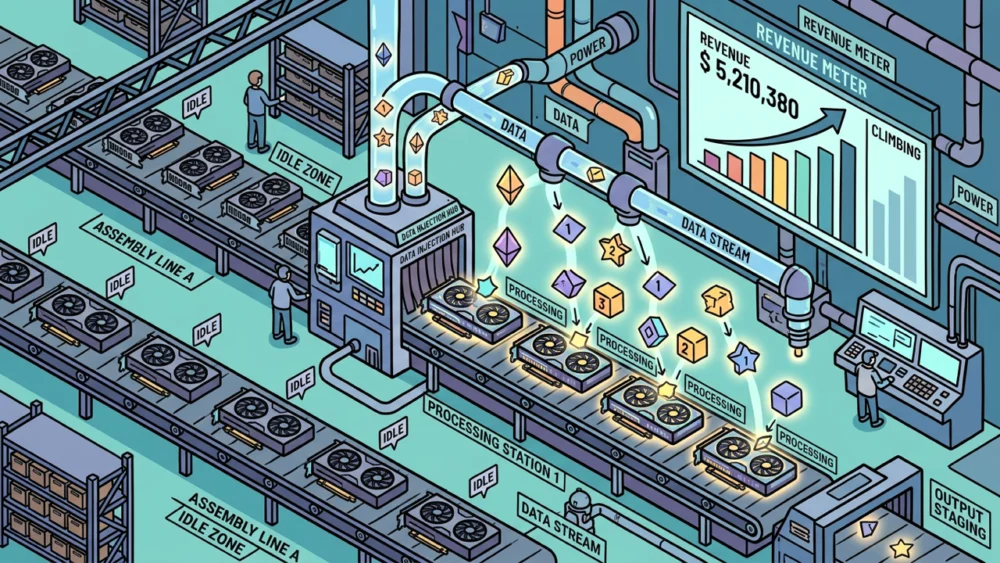

Every GPU cluster, whether it powers cutting-edge research or enterprise applications, has periods of inactivity, which the industry calls "dead time." Training jobs finish, workloads shift, or maintenance windows open, and powerful hardware sits dormant. Meanwhile the costs of keeping those GPUs available, chiefly electricity and cooling, keep accruing. For the operators of "neoclouds" (providers that specialize in dedicated or highly optimized GPU infrastructure), those empty cycles come straight out of their margins.

The conventional fix has been spot GPU markets, which let cloud vendors rent surplus capacity to a broader pool of users who want on-demand access to GPUs. In that model, however, the cloud vendor remains the intermediary and keeps a significant share of the revenue. Engineers who buy capacity on spot markets are also typically buying raw compute: the hardware, without the preconfigured software stack needed for efficient AI inference (running trained models to generate predictions or outputs). Without that "inference stack," the buyer has to configure and optimize the serving software themselves, which adds complexity and cost.

FriendliAI, founded by Byung-Gon Chun, a researcher whose work laid the groundwork for the widely adopted vLLM open-source inference engine, offers a different solution: running AI inference workloads directly on the unused hardware that would otherwise sit idle. The approach is optimized for token throughput, the number of tokens (units of text or data) a model can process per unit of time. Critically, FriendliAI splits the revenue from these inference jobs with the neocloud operator.

Chun's research has long focused on running machine learning models efficiently at scale. For more than a decade he served as a professor at Seoul National University, studying how to reduce the computational cost of AI. That work led to "Orca," a paper presented at the USENIX Symposium on Operating Systems Design and Implementation (OSDI) in 2022, which introduced iteration-level scheduling, the technique now widely known as continuous batching. Instead of accumulating a fixed-size batch of requests and running it until every request in it has finished, the scheduler acts at each decoding step: completed requests leave the batch and waiting requests join it between iterations. This reduces latency and keeps the GPU busier. Continuous batching has since become an industry standard and is a core mechanism behind the performance of vLLM, one of the most widely used open-source inference engines in production.
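The difference between the two scheduling policies is easiest to see in a toy simulation. The sketch below is not Orca's or vLLM's scheduler: it assumes every request needs a known, fixed number of decode steps and ignores prefill and GPU memory limits, and the function names and request lengths are illustrative. Static batching runs each batch until its longest request finishes; continuous (iteration-level) batching refills a slot as soon as its request completes.

```python
from collections import deque


def static_batching_steps(lengths, batch_size):
    """Decode steps when each fixed batch runs until its longest request finishes."""
    steps = 0
    for i in range(0, len(lengths), batch_size):
        batch = lengths[i:i + batch_size]
        # Short requests keep their slot occupied until the longest one is done.
        steps += max(batch)
    return steps


def continuous_batching_steps(lengths, batch_size):
    """Decode steps when free slots are refilled between iterations."""
    queue = deque(lengths)
    running = []  # remaining decode steps for each in-flight request
    steps = 0
    while queue or running:
        # Admit waiting requests into free slots before the next iteration.
        while queue and len(running) < batch_size:
            running.append(queue.popleft())
        # One decoding iteration: every running request emits one token.
        running = [r - 1 for r in running]
        steps += 1
        # Finished requests leave immediately, freeing their slots.
        running = [r for r in running if r > 0]
    return steps


# Decode steps each request needs (illustrative values only).
lengths = [2, 8, 3, 8, 1, 2, 7, 1]
print("static:", static_batching_steps(lengths, batch_size=4))
print("continuous:", continuous_batching_steps(lengths, batch_size=4))
```

With these made-up lengths and four slots, the static scheduler needs 15 decode steps and the continuous one 10, because short requests no longer hold a slot idle while a long request in the same batch keeps generating.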

This week FriendliAI is launching InferenceSense, a platform that opens this model up to GPU operators more broadly. The name nods to Google AdSense, which lets website publishers monetize unsold advertising inventory. In the same way, neocloud operators can use InferenceSense to fill underutilized GPU cycles with paid AI inference workloads and take a share of the revenue from the tokens processed. A core rule of the platform is that the operator's own workloads always take precedence: the moment the operator's scheduler reclaims a GPU, InferenceSense yields the hardware.

"What we are providing is that instead of letting GPUs be idle, by running inferences they can monetize those idle GPUs," Chun explained to VentureBeat, underscoring the core value proposition of the platform. "This transforms a cost center into a revenue-generating asset."

From Seoul National University Lab to the Engine Behind vLLM

FriendliAI was founded in 2021, when the AI industry was still focused mainly on model training and inference received comparatively little attention. Its flagship product is a dedicated inference endpoint service for AI startups and enterprises that run open-weight models, whose trained weights are publicly released and which make up a large and growing segment of the AI landscape. FriendliAI is also listed on Hugging Face, the leading platform for sharing AI models, as a deployment option alongside Azure, AWS, and GCP, and it supports more than 500,000 open-weight models hosted there.

InferenceSense applies that inference engine to a different problem: the capacity GPU operators leave unused between their primary workloads. It is a direct answer to the "dead time" problem, turning idle resources into a source of income.

The Mechanics of InferenceSense: Seamless Integration and Optimized Performance

InferenceSense runs on top of Kubernetes, the widely adopted open-source system for automating the deployment, scaling, and management of containerized applications, which most neocloud operators already use to orchestrate their resources. The operator starts by allocating a pool of GPUs to a Kubernetes cluster managed by FriendliAI, declaring which nodes are available to InferenceSense and under what conditions the operator's own scheduler can reclaim them. Idle detection is handled through Kubernetes itself, so InferenceSense uses a GPU only when it is actually free.
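FriendliAI has not published the format of these node declarations, so the following is a purely hypothetical Python model of the idea: the operator opts specific nodes in, attaches conditions (here an assumed minimum idle period before lending), and only GPUs the operator's own scheduler is not using are offered to the inference pool. `NodePolicy`, `NodeState`, `lendable_gpus`, and the `min_idle_seconds` cooldown are invented for illustration; in a real cluster this information would come from Kubernetes (for example node labels and the scheduler's view of allocated GPUs) rather than hand-built objects.

```python
from dataclasses import dataclass


@dataclass
class NodePolicy:
    """Hypothetical terms an operator declares for one node."""
    name: str
    gpus: int
    opted_in: bool                 # node participates in the inference pool at all
    min_idle_seconds: float = 300  # hypothetical cooldown before free GPUs are lent


@dataclass
class NodeState:
    """Hypothetical snapshot of what the operator's own scheduler is doing."""
    name: str
    operator_gpus_in_use: int
    idle_seconds: float  # how long the node's free GPUs have gone unused


def lendable_gpus(policies, states):
    """Map node name -> number of GPUs the inference pool may borrow right now."""
    state_by_name = {s.name: s for s in states}
    lendable = {}
    for policy in policies:
        state = state_by_name.get(policy.name)
        if not policy.opted_in or state is None:
            continue  # operator never offered this node, or no data for it
        free = policy.gpus - state.operator_gpus_in_use
        if free > 0 and state.idle_seconds >= policy.min_idle_seconds:
            lendable[policy.name] = free
    return lendable


policies = [
    NodePolicy("node-a", gpus=8, opted_in=True),
    NodePolicy("node-b", gpus=8, opted_in=False),
    NodePolicy("node-c", gpus=8, opted_in=True, min_idle_seconds=60),
]
states = [
    NodeState("node-a", operator_gpus_in_use=8, idle_seconds=0),
    NodeState("node-b", operator_gpus_in_use=0, idle_seconds=3600),
    NodeState("node-c", operator_gpus_in_use=2, idle_seconds=120),
]
print(lendable_gpus(policies, states))
```

Only `node-c` qualifies here: `node-a` is fully busy with the operator's work and `node-b` was never opted in, so the call returns `{'node-c': 6}`.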

"We have our own orchestrator that runs on the GPUs of these neocloud – or just cloud – vendors," Chun elaborated. "We definitely take advantage of Kubernetes, but the software running on top is a really highly optimized inference stack." This hybrid approach leverages the robust orchestration capabilities of Kubernetes while introducing FriendliAI’s specialized, high-performance inference software.

When Kubernetes detects unused GPUs, InferenceSense spins up isolated containers to serve paid inference workloads on popular open-weight models, including DeepSeek, Qwen, Kimi, GLM, and MiniMax. These workloads are preemptible: as soon as the operator's scheduler needs the hardware back, for example for a high-priority training job or a critical inference task, InferenceSense stops its workloads and returns the GPUs. FriendliAI says this handoff completes within seconds, keeping disruption to the operator's core operations to a minimum.
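The preemption rule can be modeled as a simple invariant: inference may occupy only the GPUs the operator is not using, and any shortfall is resolved by evicting inference, never by delaying the operator. The class below is a hypothetical toy (`PreemptibleInferencePool` and its methods are invented names) that ignores container startup time and the seconds-scale handoff FriendliAI describes; it does not reflect how InferenceSense is implemented. In Kubernetes itself, pod priority and preemption (configured through `PriorityClass`) is the standard way to let higher-priority pods evict lower-priority ones, though the article does not say whether InferenceSense relies on it.

```python
class PreemptibleInferencePool:
    """Toy model: inference may use only the GPUs the operator is not using."""

    def __init__(self, total_gpus):
        self.total_gpus = total_gpus
        self.operator_gpus = 0   # GPUs held by the operator's own scheduler
        self.inference_gpus = 0  # GPUs lent to preemptible inference containers
        self.log = []            # (action, gpu_count) events, in order
        self._rebalance()

    def _rebalance(self):
        idle = self.total_gpus - self.operator_gpus
        if self.inference_gpus > idle:
            # Operator priority: evict inference at once, no negotiation.
            self.log.append(("preempt", self.inference_gpus - idle))
            self.inference_gpus = idle
        elif self.inference_gpus < idle:
            # Newly idle GPUs are filled with inference containers.
            self.log.append(("start", idle - self.inference_gpus))
            self.inference_gpus = idle

    def operator_claim(self, n):
        """Operator's scheduler takes n GPUs back for its own jobs."""
        if n > self.total_gpus - self.operator_gpus:
            raise ValueError("not enough GPUs even after preempting inference")
        self.operator_gpus += n
        self._rebalance()

    def operator_release(self, n):
        """Operator's job finishes and frees n GPUs."""
        self.operator_gpus = max(0, self.operator_gpus - n)
        self._rebalance()


pool = PreemptibleInferencePool(total_gpus=8)  # cluster idle: inference fills it
pool.operator_claim(6)    # training job starts: 6 inference GPUs are preempted
pool.operator_release(6)  # job ends: those GPUs go back to inference
print(pool.log)
```

The printed log is `[('start', 8), ('preempt', 6), ('start', 6)]`: the operator's claim always succeeds immediately, and inference only ever fills what is left over.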

InferenceSense draws demand from two channels: FriendliAI's own customers who need inference capacity, and inference aggregators such as OpenRouter, which pool demand from many sources. The neocloud operator's main contribution is the physical GPU capacity; FriendliAI handles the demand pipeline, optimizes the models for efficient inference, and provides the serving stack. There are no upfront fees and no minimum commitment, which makes this a low-risk proposition for operators. A real-time dashboard shows operators which models are running, how many tokens are being processed, and how much revenue has accrued.

The Token Throughput Advantage: Beyond Raw Capacity Rental

InferenceSense differs fundamentally from traditional spot GPU markets. Providers such as CoreWeave, Lambda Labs, and RunPod mainly rent their own hardware to third parties. InferenceSense instead runs on hardware the neocloud operator already owns and manages, and the operator keeps control: it decides which nodes participate and agrees on scheduling terms with FriendliAI in advance. Spot markets monetize raw capacity; InferenceSense monetizes the tokens processed through inference.

The metric that determines how much InferenceSense can earn in these idle windows is token throughput per GPU-hour. FriendliAI claims its proprietary inference engine delivers two to three times the token throughput of a standard vLLM deployment, though Chun notes that the actual gain varies with the workload type and the model being served.

That performance comes from FriendliAI's custom inference stack. Competing inference solutions are often built on Python-based open-source frameworks; FriendliAI's engine is written in C++, which gives finer-grained control over optimization. It also uses custom GPU kernels rather than relying on Nvidia's widely used cuDNN library, so kernel operations can be tailored to the engine's needs. The company has built its own model representation layer, which partitions and executes models efficiently across heterogeneous hardware. This layer includes custom implementations of speculative decoding (a smaller, faster draft model proposes several tokens, which the larger target model then verifies in a single pass), quantization (storing model weights at lower numerical precision to cut memory footprint and computation), and KV-cache management (storing and reusing the attention key and value tensors computed for earlier tokens so they do not have to be recomputed at every step).
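To illustrate one of these techniques, here is a minimal sketch of the greedy form of speculative decoding. It is the textbook technique, not FriendliAI's implementation (which is not public); the two "models" are toy deterministic functions, and `greedy_decode`, `speculative_decode`, `target_next`, and `draft_next` are illustrative names. Production systems that sample rather than decode greedily use a rejection-sampling acceptance rule to preserve the target model's output distribution.

```python
def greedy_decode(next_token, prompt, num_tokens):
    """Baseline: one target-model call per generated token."""
    seq = list(prompt)
    for _ in range(num_tokens):
        seq.append(next_token(seq))
    return seq[len(prompt):]


def speculative_decode(target_next, draft_next, prompt, num_tokens, k=4):
    """Greedy speculative decoding; output matches greedy_decode(target_next, ...)."""
    seq = list(prompt)
    verify_passes = 0
    while len(seq) - len(prompt) < num_tokens:
        # 1. The cheap draft model proposes k tokens autoregressively.
        draft = []
        for _ in range(k):
            draft.append(draft_next(seq + draft))
        # 2. The target model checks all k positions. A real engine does this in
        #    one batched forward pass, so it is counted as one verification pass.
        verify_passes += 1
        accepted = []
        for tok in draft:
            expected = target_next(seq + accepted)
            if tok != expected:
                accepted.append(expected)  # target's correction ends the round
                break
            accepted.append(tok)
        else:
            # Every draft token matched: the same pass yields one bonus token.
            accepted.append(target_next(seq + accepted))
        seq.extend(accepted)
    return seq[len(prompt):len(prompt) + num_tokens], verify_passes


# Toy "models": the target counts up mod 10; the draft is wrong after a 5.
def target_next(seq):
    return (seq[-1] + 1) % 10


def draft_next(seq):
    return 0 if seq[-1] == 5 else (seq[-1] + 1) % 10


tokens, passes = speculative_decode(target_next, draft_next, [0], num_tokens=12)
print(tokens, passes)
print(tokens == greedy_decode(target_next, [0], 12))
```

The output is identical to plain greedy decoding with the target model, but the target runs 4 verification passes instead of 12 single-token steps. How much this saves in practice depends entirely on how often the draft agrees with the target.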

If FriendliAI's engine does process significantly more tokens per GPU-hour than a standard vLLM stack, as the company claims, operators can earn more from each hour of unused GPU capacity, making their infrastructure investment more profitable.
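The economics reduce to simple arithmetic: operator revenue = idle GPU-hours × tokens per GPU-hour × price per token × operator's revenue share. The sketch below plugs in placeholder numbers. The article does not disclose FriendliAI's revenue split or per-token prices, so the baseline throughput, price, and share are assumptions chosen only to make the arithmetic easy to follow, and the 2.5x factor is simply the midpoint of the company's claimed two-to-three-times range. `operator_revenue` is an illustrative name.

```python
def operator_revenue(idle_gpu_hours, tokens_per_gpu_hour,
                     usd_per_million_tokens, operator_share):
    """Operator's share of inference revenue earned on otherwise idle GPUs.

    Assumes demand is large enough to absorb every token the GPUs can
    produce; if demand is the bottleneck, extra throughput earns nothing.
    """
    tokens = idle_gpu_hours * tokens_per_gpu_hour
    gross_usd = tokens / 1_000_000 * usd_per_million_tokens
    return gross_usd * operator_share


# Every number below is a hypothetical placeholder, not a FriendliAI figure.
IDLE_GPU_HOURS = 100          # idle time in the billing period
BASELINE_TPH = 3_600_000      # tokens per GPU-hour (1,000 tokens/s per GPU)
PRICE = 0.50                  # USD per million tokens
SHARE = 0.5                   # operator's fraction of revenue

baseline = operator_revenue(IDLE_GPU_HOURS, BASELINE_TPH, PRICE, SHARE)
faster = operator_revenue(IDLE_GPU_HOURS, 2.5 * BASELINE_TPH, PRICE, SHARE)
print(f"baseline: ${baseline:.2f}, 2.5x throughput: ${faster:.2f}")
```

With these placeholders it prints `baseline: $90.00, 2.5x throughput: $225.00`. Revenue scales linearly with throughput only while demand keeps the GPUs saturated, which is why FriendliAI's role in aggregating demand matters as much as the engine's speed.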

Strategic Implications for AI Engineers and the Future of Inference Costs

For AI engineers evaluating inference costs, the choice between neocloud providers and hyperscale cloud platforms has historically come down to price and availability. InferenceSense adds a new factor: by letting neoclouds monetize idle GPU capacity through inference workloads, it gives those operators a stronger economic incentive to keep token prices competitive.

This does not call for immediate changes to existing infrastructure decisions, since the ecosystem is still young, but it is a trend worth watching. Engineers tracking their total inference spend should watch whether adoption of platforms like InferenceSense among neocloud providers starts to push down API prices for popular models such as DeepSeek and Qwen over the next 12 to 18 months.

"When we have more efficient suppliers, the overall cost will go down," Chun said. "With InferenceSense we can contribute to making those models cheaper." If more efficient GPU utilization and inference serving do lower costs, the benefit would extend beyond neocloud operators: cheaper, more accessible inference would lower the barrier to entry for teams building and deploying AI applications, and make the market for inference more competitive.