Large language models (LLMs) are running into significant limitations in domains that demand an understanding of the physical world. In robotics, autonomous driving, and manufacturing, their linguistic ability has not translated into reliable physical reasoning. This constraint is reshaping the investment landscape, steering venture capital toward "world models": AI systems designed to internalize and simulate the physical world. Recent funding rounds reflect the shift: AMI Labs has raised a $1.03 billion seed round, shortly after World Labs secured a $1 billion investment.

At their core, LLMs process and generate abstract knowledge through next-token prediction. They can produce coherent narratives, answer complex questions, and generate creative text by predicting the most probable sequence of words. This strength also exposes their fundamental weakness: a lack of grounding in physical causality. They operate on statistical relationships between words, not in a world governed by Newton’s laws or material properties. Consequently, they cannot reliably predict the physical consequences of real-world actions, which makes them ill-suited for tasks requiring an intuitive grasp of physics, spatial reasoning, or cause and effect in tangible environments.
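To make the idea of next-token prediction concrete, here is a minimal sketch using a bigram counter. It is far cruder than a real LLM, which conditions on a long context with a neural network, but it optimizes for the same kind of signal: which word tends to follow which. The corpus and the function names `train_bigram` and `predict_next` are invented for this illustration.

```python
from collections import Counter, defaultdict

def train_bigram(corpus: str) -> dict:
    """Count, for each word, which words follow it in the corpus."""
    counts = defaultdict(Counter)
    words = corpus.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts: dict, word: str):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Toy corpus (an assumption for this sketch, not real training data).
corpus = (
    "the glass fell and broke . "
    "the glass fell and broke . "
    "the ball fell and bounced ."
)
model = train_bigram(corpus)

# Asked to continue "the ball fell and", this model looks only at word
# statistics after "and" and knows nothing about what balls do.
print(predict_next(model, "and"))  # → broke
```

The prediction follows word frequency, not physics: the toy model says the ball "broke" simply because that continuation was more common in its training text.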

Prominent AI researchers and industry leaders are amplifying this awareness of LLM limitations, which become starkly apparent as the industry tries to move AI out of web browsers and digital interfaces and into physical spaces. In an interview with podcaster Dwarkesh Patel, Turing Award laureate Richard Sutton warned that LLMs primarily mimic human speech rather than model the world. That mimicry limits their capacity to learn from experience and adapt to changing real-world conditions, which is precisely what hinders their application in complex, dynamic physical systems.

The consequences are already visible in existing AI models. Systems built on LLM foundations, including vision-language models (VLMs) that try to bridge visual perception and linguistic understanding, often exhibit brittle behavior. They can fail when their input changes even slightly: a change in lighting, a shift in object orientation, or an unexpected occlusion can produce erroneous predictions or outright system failure, which points to a superficial grasp of the physical scene.

Demis Hassabis, the CEO of Google DeepMind, has described a related problem in contemporary AI models: "jagged intelligence." In a discussion with the same podcaster, Hassabis said that these models can solve complex mathematics olympiad problems yet stumble on elementary physics problems. The gap, he explained, comes from a deficit in capabilities related to real-world dynamics and physical interactions. The models possess advanced abstract reasoning but lack the intuitive, embodied understanding that humans develop through constant engagement with the physical environment.

To address this challenge, researchers are increasingly focusing on "world models." These models act as internal simulators that let an AI system test hypotheses and predict the outcomes of candidate actions before executing them in the physical world, which allows agents to learn, plan, and act with a degree of foresight and safety. "World models" is an umbrella term, however, covering several distinct architectural approaches. Three primary paradigms are emerging, each with its own tradeoffs.
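The "internal simulator" idea can be sketched as a planner that tries actions in imagination before committing to any of them. In the minimal example below, the world model is a hand-written one-dimensional cart rather than a learned network, and `simulate`, `rollout`, `plan`, and the cost function are all assumptions made for illustration.

```python
from itertools import product

def simulate(position, velocity, action, dt=0.1):
    """Hypothetical world model: one step of a 1-D cart pushed by `action`."""
    velocity = velocity + action * dt
    position = position + velocity * dt
    return position, velocity

def rollout(position, velocity, actions):
    """Apply a sequence of actions inside the model, never in the real world."""
    for action in actions:
        position, velocity = simulate(position, velocity, action)
    return position, velocity

def plan(position, velocity, goal, horizon=5, choices=(-1.0, 0.0, 1.0)):
    """Try every action sequence in imagination and keep the cheapest one."""
    best_actions, best_cost = None, float("inf")
    for actions in product(choices, repeat=horizon):
        final_pos, final_vel = rollout(position, velocity, actions)
        # Cost: end near the goal and nearly at rest.
        cost = abs(final_pos - goal) + abs(final_vel)
        if cost < best_cost:
            best_actions, best_cost = actions, cost
    return best_actions, best_cost

actions, cost = plan(position=0.0, velocity=0.0, goal=0.05)
print(actions, cost)
```

Exhaustive search only works for tiny action spaces like this one; the point is the structure, in which candidate actions are evaluated against the model's predictions and only the chosen plan would be executed.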

JEPA: Engineered for Real-Time Responsiveness

The first approach learns abstract, or "latent," representations of the world rather than predicting its dynamics at the pixel level. This methodology, strongly advocated by AMI Labs, builds on the Joint Embedding Predictive Architecture (JEPA). JEPA models are meant to emulate how humans perceive: when we observe the world, we do not process and memorize every pixel or inconsequential detail in a scene. Instead, we extract the salient information and focus on core relationships and dynamics. Watching a car drive down a street, for instance, we track its trajectory, speed, and intent rather than the light reflecting off every leaf of the surrounding trees.

JEPA models aim to replicate this efficiency. Rather than forcing a neural network to predict the exact visual appearance of the next video frame, they learn a compressed set of abstract features. They discard irrelevant details, such as background clutter or minor fluctuations, and concentrate on the rules governing how the key elements of a scene interact. This focus on abstract representations makes JEPA models more robust to background noise, minor occlusions, and subtle input variations that would cause other models to falter.
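The following toy comparison shows why an error measured in representation space can ignore irrelevant detail that dominates a pixel-level error. It is not JEPA training: a real JEPA learns its encoder and predictor from data, whereas the `encode` function here is a hand-written stand-in, and the 1-D "frames", the threshold, and all names are assumptions made for the sketch.

```python
import random

WIDTH = 64

def render(object_pos: int, noise: float, rng: random.Random) -> list:
    """Draw a 1-D frame: noisy background plus one bright 3-pixel object."""
    frame = [rng.uniform(0.0, noise) for _ in range(WIDTH)]
    for i in (object_pos - 1, object_pos, object_pos + 1):
        frame[i] = 1.0
    return frame

def encode(frame: list, threshold: float = 0.8) -> float:
    """Stand-in encoder: keep only the object's position, drop the background."""
    bright = [i for i, value in enumerate(frame) if value >= threshold]
    return sum(bright) / len(bright)

def pixel_error(a: list, b: list) -> float:
    """Squared error summed over every pixel, background included."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def latent_error(a: list, b: list) -> float:
    """Squared error between the two encoded representations."""
    return (encode(a) - encode(b)) ** 2

rng = random.Random(0)
frame_a = render(object_pos=20, noise=0.5, rng=rng)
frame_b = render(object_pos=20, noise=0.5, rng=rng)  # same object, new background

print("pixel error: ", pixel_error(frame_a, frame_b))
print("latent error:", latent_error(frame_a, frame_b))
```

The two frames differ only in background noise, so the pixel error is large while the latent error is zero; a model scored in latent space is not penalized for failing to reproduce detail that does not matter.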

This architecture is also efficient in compute and memory. Because they discard superfluous information, JEPA models need fewer training examples and run with lower latency, which suits applications where efficiency and real-time inference are paramount, including robotics, autonomous driving, and high-stakes enterprise workflows. AMI, for example, is working with healthcare company Nabla to use this architecture to simulate complex operational scenarios in healthcare settings and reduce cognitive load in fast-paced medical environments. Yann LeCun, a pioneer of the JEPA architecture and a co-founder of AMI, has emphasized that world models based on JEPA are designed to be "controllable in the sense that you can give them goals, and by construction, the only thing they can do is accomplish those goals."

Gaussian Splats: Sculpting the Spatial Universe

A second approach uses generative models to build complete spatial environments from scratch. This method, adopted by companies like World Labs, starts from an initial prompt, either an image or a textual description. A generative model then uses the prompt to create a three-dimensional representation known as a "Gaussian splat," a technique that represents a 3D scene with millions of tiny, mathematically defined particles that together define the geometry, texture, and lighting of the environment. Unlike flat 2D video generation, these 3D representations can be imported directly into standard physics and 3D engines, such as Unreal Engine, so that users and other AI agents can navigate and interact with the environment from any vantage point.
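As a rough sketch of how such particles become an image, the code below composites a handful of Gaussians front to back along a viewing ray. It simplifies heavily: the Gaussians are isotropic, the projection is orthographic (the ray through image point `(px, py)` runs straight along the z axis), and each splat has a single fixed color. Production splat renderers use anisotropic Gaussians, perspective projection, and view-dependent color. The `Splat` class and `shade_pixel` function are names invented for this example, not any engine's API.

```python
import math
from dataclasses import dataclass

@dataclass
class Splat:
    center: tuple   # (x, y, z); larger z means farther from the camera
    radius: float   # standard deviation of an isotropic Gaussian (simplification)
    color: tuple    # (r, g, b), each in [0, 1]
    opacity: float  # peak opacity in [0, 1]

def shade_pixel(px: float, py: float, splats: list) -> tuple:
    """Composite splats front to back along the ray through (px, py)."""
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light not yet blocked by nearer splats
    for s in sorted(splats, key=lambda s: s.center[2]):  # nearest first
        dx, dy = px - s.center[0], py - s.center[1]
        # Opacity falls off with distance from the splat's center.
        alpha = s.opacity * math.exp(-0.5 * (dx * dx + dy * dy) / s.radius ** 2)
        for channel in range(3):
            color[channel] += transmittance * alpha * s.color[channel]
        transmittance *= 1.0 - alpha
    return tuple(color)

scene = [
    Splat(center=(0.0, 0.0, 2.0), radius=1.0, color=(0.0, 0.0, 1.0), opacity=1.0),
    Splat(center=(0.0, 0.0, 1.0), radius=1.0, color=(1.0, 0.0, 0.0), opacity=0.5),
]
print(shade_pixel(0.0, 0.0, scene))  # → (0.5, 0.0, 0.5)
```

Because the scene is an explicit list of 3D primitives rather than a stack of rendered frames, the same data can be viewed from any camera position, which is what makes the representation portable to external engines.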

The primary benefit of this approach is that it drastically reduces the time and upfront generation cost of creating complex, interactive 3D environments. It also addresses a problem highlighted by World Labs founder Fei-Fei Li, who has described LLMs as "wordsmiths in the dark": eloquent in language but lacking the spatial intelligence and physical experience needed for real-world understanding. World Labs’ Marble model aims to give AI this spatial awareness so that it can perceive, reason about, and interact with the three-dimensional world.

Although the Gaussian splat approach is not designed for split-second, real-time execution the way JEPA models are, it holds significant promise for spatial computing, interactive entertainment, industrial design, and the creation of static but highly realistic training environments for robotics. Its commercial value is underscored by backing from established industry players. Autodesk, a leader in industrial design software, has invested heavily in World Labs with the aim of integrating these world models into its design applications.

End-to-End Generation: Mastering Scale and Dynamics

The third approach uses an end-to-end generative model that processes prompts and user actions in a continuous loop, generating the scene, its physical dynamics, and the resulting reactions on the fly. Instead of exporting a static 3D file to an external physics engine, the model itself serves as the engine. It ingests an initial prompt alongside a continuous stream of user actions and generates subsequent frames of the environment in real time. Physics, lighting, and object reactions are all computed natively within the model.
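The shape of that interaction loop can be sketched with a stand-in model. Everything below is hypothetical: `ToyWorldModel` uses hand-written rules where a real system would run a learned generative network, its `reset` and `step` methods are not the interface of any actual product, and the "frames" are text strings rather than images.

```python
from dataclasses import dataclass

@dataclass
class ToyWorldModel:
    """Stand-in for a learned generative model; the dynamics are hand-written."""
    width: int = 10
    player: int = 0

    def reset(self, prompt: str) -> str:
        # A real model would condition on the prompt; this toy ignores its content.
        self.player = 0
        return self._frame()

    def step(self, action: str) -> str:
        # The model itself advances the state; no external physics engine is called.
        if action == "right":
            self.player = min(self.width - 1, self.player + 1)
        elif action == "left":
            self.player = max(0, self.player - 1)
        return self._frame()

    def _frame(self) -> str:
        return "".join("@" if i == self.player else "." for i in range(self.width))

def play(model, prompt: str, actions: list) -> list:
    """Feed a stream of actions to the model and collect the frames it emits."""
    frames = [model.reset(prompt)]
    for action in actions:
        frames.append(model.step(action))
    return frames

for frame in play(ToyWorldModel(width=5), "a corridor", ["right", "right", "left"]):
    print(frame)
```

The caller only ever supplies a prompt and actions and receives frames back; all state and dynamics live inside the model, which is the property that distinguishes this approach from exporting a scene to a separate engine.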

Notable examples of this approach include Google DeepMind’s Genie 3 and Nvidia’s Cosmos. These models offer a simple interface for generating a practically unlimited range of interactive experiences and large volumes of synthetic data. DeepMind has demonstrated that Genie 3 can maintain strict object permanence and consistent physics at 24 frames per second without relying on a separate memory module.

This end-to-end generative approach translates directly into synthetic data generation pipelines. Nvidia Cosmos, for instance, uses this architecture to scale synthetic data creation and physical AI reasoning. Developers of autonomous vehicles and robots can synthesize rare, dangerous, or otherwise challenging edge-case conditions for training without the cost or risk of physical testing. Waymo, another Alphabet subsidiary, has built its world model on top of DeepMind’s Genie 3, adapting it for training its self-driving cars.

The primary drawback of the end-to-end generative method is the substantial compute cost of continuously rendering physics and visuals at the same time. Hassabis has argued, however, that the investment is necessary to reach AI with a deep, internal understanding of physical causality. In his view, current AI systems lack critical capabilities needed to operate safely and effectively in the real world, and closing that gap requires pushing both computational power and model architecture.

The Horizon: Hybrid Architectures and Future Convergence

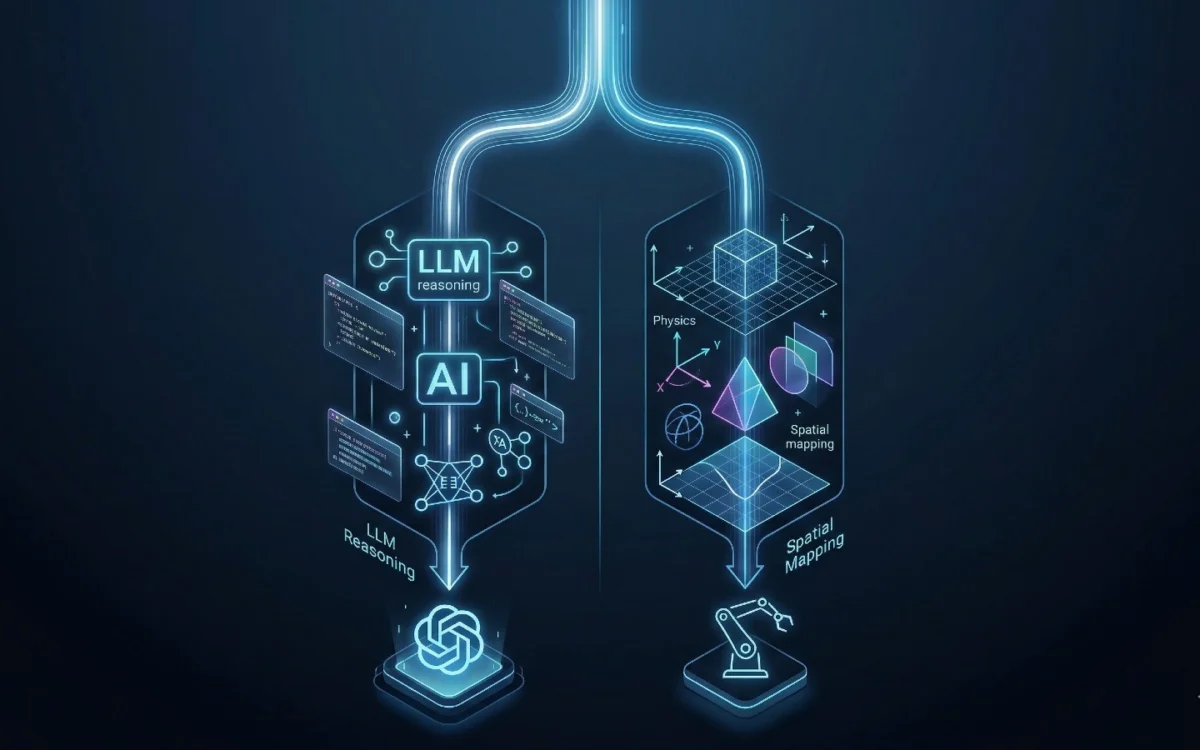

LLMs will continue to play a vital role, primarily as the reasoning and communication interface for AI systems, translating between human intent and the world model’s understanding of physical reality. World models, meanwhile, are positioning themselves as the foundational infrastructure for physical and spatial data pipelines. As the underlying models mature, hybrid architectures that combine the strengths of each approach are beginning to emerge.

One example is LogLM, a model developed by cybersecurity startup DeepTempo that integrates elements of both LLMs and JEPA to detect anomalies and cyber threats in security and network logs. The combination suggests that specialized architectures need not be mutually exclusive and can instead serve as complementary components of a larger system. The future of AI in physical domains likely lies in such combinations, where the linguistic fluency of LLMs is augmented by the physical grounding of world models, leading to AI that can both comprehend the physical world and interact with it safely and effectively.